Step-by-Step Guide on Building Agentic AI for Business

Key takeaways

- Agentic AI moves beyond replies to executing tasks across tools, while keeping outcomes verifiable and accountable.

- Production agents rely on structure, not just model capability. Reasoning, tools, case state, policy boundaries and orchestration must work together to keep actions controlled and consistent.

- Start small with workflows that are clear, repeatable and low-risk. Choose use cases with defined outcomes, stable rules and reliable system access to build confidence early.

- Autonomy is introduced gradually through design, not assumed upfront. Begin with assist or hybrid modes, validate behavior, and expand scope only when decisions remain predictable and explainable.

- Testing and review are what make agents deployable, not demos. Use offline testing, shadow mode and controlled rollout to understand behavior before scaling real-world impact.

You may already be past the curiosity stage with agentic systems. Models can reason. Tools can execute. Almost everything that can technically be automated already is.

What slows teams down now is a different problem: building an agentic AI that turns business intent into action without losing control, context, or accountability along the way.

That’s why how to build agentic AI has become a design question more than a technology one. You’re no longer choosing between bots and humans. You’re deciding how tasks that previously required human attention at every step move through real systems, how policy shapes each decision along the way, and how responsibility is preserved with the right balance of automation and oversight.

If you’re searching for how to build an agentic AI for customer experience, this guide is a set of grounded choices that help you build something you can deploy without holding your breath.

- Agentic AI vs “Just an LLM”: Why the build is different

- Before you build: Pick one workflow worth automating

- Blueprint: The minimum architecture of a production agent

- Step-by-step: Building your first agentic AI agent

- Common mistakes teams make when building their first agent

- How to build an agentic AI that outperforms at customer experience

Agentic AI vs “Just an LLM”: Why the build is different

A single LLM call is great at producing an answer. An agentic system is built to produce an outcome.

That shift changes what you must build around the model.

- With an LLM assistant, the output is usually text: guidance, a draft reply, a summary.

- With an agent, the output is a sequence of actions: read data from a system, apply policy, make a change, confirm it happened, log it, notify the customer.

So, the moment a system is allowed to act, you need to think about things like:

- what systems it can touch

- what it is allowed to change

- how you know what it did

- how you recover when something fails

This is where teams get burned: if you treat “how to build agentic AI” like “how to prompt a chatbot,” you end up with something that looks impressive in a demo and fragile in production. Production agents require controls: approvals, safe stopping points, and logs that can explain what happened. In practice, autonomy is never a starting point. It is earned through consistent behavior, clear boundaries, and the ability to explain every action taken.

Before you build: Pick one workflow worth automating

This is where most agentic AI efforts quietly fail.

Teams get excited about the capability and try to automate something large, sensitive, or poorly defined. The agent then exposes every ambiguity in policy, ownership, and system access all at once.

The teams that succeed start smaller. Much smaller.

What makes a strong first workflow

A good starting workflow has four qualities.

- It happens often. You want something your team sees every day. Repetition lets you learn quickly.

- You can clearly tell when it’s done. “Password reset completed.” “Plan changed.” “Address updated.” If success is subjective, the agent has nothing solid to aim for.

- The rules are written down and mostly stable. Not perfect. Just clear enough that two humans would usually make the same call.

- The systems already support the action. An agent can only do what your systems allow it to do safely. If today the work requires human agents to jump between screens, copy values, or ask for approval in chat, the AI agent has nothing reliable to trigger. In that case, the work isn’t “build the agent” yet. It’s first turning those manual steps into clear system actions that return a definite result.

Strong starting points to build agentic AI in CX

These are typical “first agent” candidates because outcomes are crisp and risk can be constrained:

- password resets and account recovery flows with strict identity checks

- address changes with validation and confirmation

- order status and delivery updates that are read-heavy

- low-risk refunds with strict limits and clear eligibility rules

- case triage and after-contact work that writes notes, tags, and routing decisions

Avoid these as your first build

- high-stakes decisions with major customer harm if wrong (credit, medical, legal)

- workflows with unresolved ownership or conflicting policies

- actions with no reliable tool access or no way to confirm completion

A simple selection scorecard

Use a basic scorecard: volume, completion clarity, policy clarity, risk, data readiness, tool readiness. Pick the workflow that scores well across most dimensions, then reduce risk further by putting hard limits on what the agent can do in v1.
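
The scorecard can be as simple as a few lines of code. The sketch below is one way to do it, assuming a 1-to-5 scale per dimension and equal weights; the workflow names and scores are made up for illustration, and risk is scored inversely so that 5 means "low risk".

```python
from dataclasses import dataclass

# Dimensions from the scorecard. Each is scored 1 (weak) to 5 (strong).
# Assumption: risk is scored inversely, so 5 means "low risk".
DIMENSIONS = (
    "volume",
    "completion_clarity",
    "policy_clarity",
    "risk",
    "data_readiness",
    "tool_readiness",
)


@dataclass(frozen=True)
class WorkflowScore:
    name: str
    scores: dict  # dimension name -> score from 1 to 5

    def total(self) -> int:
        return sum(self.scores[d] for d in DIMENSIONS)

    def weakest(self) -> str:
        # The lowest-scoring dimension shows where v1 needs hard limits.
        return min(DIMENSIONS, key=lambda d: self.scores[d])


def rank(candidates):
    """Return candidates ordered from strongest to weakest total score."""
    return sorted(candidates, key=lambda c: c.total(), reverse=True)


# Hypothetical candidates with invented scores.
candidates = [
    WorkflowScore("large_refund", dict(zip(DIMENSIONS, (3, 4, 2, 1, 3, 3)))),
    WorkflowScore("address_change", dict(zip(DIMENSIONS, (4, 5, 4, 4, 4, 4)))),
]

best = rank(candidates)[0]
print(best.name, best.total())
```

The weakest dimension of the winning workflow is a reasonable place to put the first hard limit in v1.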

Blueprint: The minimum architecture of a production agent

A production agent is less “an LLM with tools” and more “a controlled workflow runner that happens to use an LLM.”

If you’re building for CX, five parts matter.

1. Reasoning layer

This is the part that interprets the request, plans a next step, and explains the outcome in plain language.

This guide doesn’t prescribe a specific model. What matters is whether the model can:

- follow instructions reliably

- use tools through structured requests

- stay consistent under edge cases

- produce explanations that can be logged and reviewed

2. Tools

Tools are how the agent interacts with your business.

A tool might retrieve a customer profile, submit a billing change, or log a case note. Each tool should have one clear responsibility and predictable behavior. If something goes wrong, it should fail clearly, not vaguely.

This matters because tools define the agent’s power. If a tool can change money or access sensitive data, that power must be tightly controlled.
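
As a rough illustration of "one clear responsibility" and "fail clearly," here is a minimal read-only tool. The `ToolError` class, the error codes, and the in-memory `_FAKE_CRM` lookup are all assumptions made for the sketch, not part of any real product API.

```python
from dataclasses import dataclass


class ToolError(Exception):
    """Raised when a tool cannot complete; carries a machine-readable code."""

    def __init__(self, code: str, message: str):
        super().__init__(message)
        self.code = code


@dataclass(frozen=True)
class CustomerProfile:
    customer_id: str
    plan: str
    account_status: str


# Stand-in for a real system of record (hypothetical data).
_FAKE_CRM = {"c-100": CustomerProfile("c-100", "pro", "active")}


def get_customer_profile(customer_id: str) -> CustomerProfile:
    """Read-only tool: one responsibility, one predictable return type."""
    if not customer_id:
        raise ToolError("invalid_input", "customer_id is required")
    profile = _FAKE_CRM.get(customer_id)
    if profile is None:
        # Fail clearly: a specific code rather than an empty or partial result.
        raise ToolError("not_found", f"no customer with id {customer_id!r}")
    return profile
```

Because the tool either returns a complete profile or raises with a specific code, the orchestration layer never has to guess what a half-empty response means.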

3. Case tracking (what engineers call “state”)

The agent needs a running record of the case so it doesn’t lose its place or repeat work.

In practice, this includes things like:

- what the customer asked for

- which checks have already been completed

- which actions have already been taken

- what remains to be done

This matters because production flows get interrupted. Systems time out, customers switch channels, and humans step in. Without this record, agents repeat actions or make conflicting changes.

A key principle is separation of responsibility.

Customer data stays in systems of record that already enforce access, retention, and audit rules. The agent carries only minimal case state—what has happened and what comes next—and refers back to those systems when data is required.
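
A minimal sketch of what that case state might look like, assuming a simple ordered list of steps. The field and step names are illustrative; note that it stores only a customer reference, not the customer's data.

```python
from dataclasses import dataclass, field


@dataclass
class CaseState:
    case_id: str
    customer_ref: str   # a reference only; profile data stays in the system of record
    request: str        # what the customer asked for
    checks_done: list = field(default_factory=list)
    actions_taken: list = field(default_factory=list)
    remaining: list = field(default_factory=list)

    def complete_step(self, step: str, is_check: bool = False) -> None:
        """Move a step from 'remaining' to the matching completed list."""
        if step not in self.remaining:
            return  # already done or never planned: do not repeat work
        self.remaining.remove(step)
        (self.checks_done if is_check else self.actions_taken).append(step)

    def next_step(self):
        return self.remaining[0] if self.remaining else None


state = CaseState(
    case_id="case-1",
    customer_ref="c-100",
    request="downgrade to basic plan",
    remaining=["verify_identity", "submit_change", "notify_customer"],
)
state.complete_step("verify_identity", is_check=True)
state.complete_step("verify_identity", is_check=True)  # interrupted and resumed: no-op
print(state.next_step())
```

If the flow is interrupted and resumed, the second `complete_step` call changes nothing, which is the behavior that prevents repeated or conflicting actions.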

4. Policy and approvals

Once an agent can change records or move money, boundaries become essential.

Policy defines:

- what actions are allowed autonomously

- what actions are blocked

- what actions require approval

For example:

- refunds above a certain amount pause for review

- enterprise accounts route automatically to specialists

- identity must be verified before any billing change

These boundaries often already exist in practice. The difference that comes with an AI agent is that they need to be explicit and enforced by your system, not inferred by the model on which the agent runs.

This is what allows teams to introduce autonomy gradually. You start with narrow limits and widen them deliberately as confidence grows.
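
One way to make such boundaries explicit is a small rule function that runs outside the model. This is a sketch under assumptions: the threshold value, tier names, and field names are invented, and real limits would come from your policy owners.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    BLOCK = "block"
    NEEDS_APPROVAL = "needs_approval"


@dataclass(frozen=True)
class ActionRequest:
    action: str               # e.g. "refund" or "billing_change"
    amount: float
    account_tier: str         # e.g. "standard" or "enterprise"
    identity_verified: bool


# Assumed threshold for illustration only.
REFUND_REVIEW_THRESHOLD = 50.0


def evaluate(req: ActionRequest) -> tuple:
    """Return (decision, reason). Rules are enforced in code, not inferred by the model."""
    if not req.identity_verified:
        return Decision.BLOCK, "identity not verified"
    if req.account_tier == "enterprise":
        return Decision.NEEDS_APPROVAL, "enterprise accounts route to specialists"
    if req.action == "refund" and req.amount > REFUND_REVIEW_THRESHOLD:
        return Decision.NEEDS_APPROVAL, "refund above review threshold"
    return Decision.ALLOW, "within autonomous limits"


print(evaluate(ActionRequest("refund", 20.0, "standard", True)))
```

Widening autonomy later then becomes a reviewed change to a threshold or rule, rather than a change in how the model happens to behave.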

5. Orchestration and logs

Orchestration is the control layer that:

- decides what happens next

- enforces timeouts and retries

- stops loops

- captures an audit trail of what the agent tried, what it did, and what happened

Two production concepts become very important here because they prevent the most painful failures:

Idempotency: do it once, even if you retry

If a refund request is retried, the system must not submit a second refund. Tools often support this through unique request IDs or action keys.
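
To show the idea, here is a toy in-memory refund tool that honors a caller-supplied key. The class, method, and key format are hypothetical; a real payment system would persist the keys and expose its own interface.

```python
class RefundTool:
    """In-memory stand-in for a payment system that honors idempotency keys."""

    def __init__(self):
        self._results = {}      # idempotency key -> result of the first attempt
        self.refunds_issued = 0

    def submit_refund(self, idempotency_key: str, customer_id: str, amount: float) -> dict:
        if idempotency_key in self._results:
            # A retry: return the original result instead of refunding again.
            return self._results[idempotency_key]
        self.refunds_issued += 1
        result = {
            "refund_id": f"r-{self.refunds_issued}",
            "customer_id": customer_id,
            "amount": amount,
            "status": "submitted",
        }
        self._results[idempotency_key] = result
        return result


tool = RefundTool()
first = tool.submit_refund("case-1:refund", "c-100", 20.0)
retry = tool.submit_refund("case-1:refund", "c-100", 20.0)  # e.g. after a timeout
print(first == retry, tool.refunds_issued)
```

The key is derived from the case, not generated per attempt, so every retry of the same intent maps to the same key.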

Compensation: recover when later steps fail

If step three succeeded and step four failed, you need a defined recovery path: void, reverse, reopen, or hand off with a precise status. This is less about elegance and more about not losing track of money and customer promises.
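
A simplified sketch of a compensation path: each step is paired with an optional undo, and a failure reverses completed steps in reverse order. The step names are illustrative, and the sketch assumes the undo actions themselves succeed; a production version would also need to handle a failed undo.

```python
def run_with_compensation(steps):
    """Run (name, action, undo) steps in order.

    If a step raises, undo the completed steps in reverse order and
    return a precise status that a human can pick up.
    """
    completed = []
    for name, action, undo in steps:
        try:
            action()
        except Exception as exc:
            reversed_steps = []
            for done_name, done_undo in reversed(completed):
                if done_undo is not None:
                    done_undo()
                    reversed_steps.append(done_name)
            return {
                "status": "failed",
                "failed_step": name,
                "error": str(exc),
                "reversed": reversed_steps,
            }
        completed.append((name, undo))
    return {"status": "completed", "steps": [n for n, _ in completed]}


log = []


def failing_crm_update():
    raise RuntimeError("CRM timeout")


outcome = run_with_compensation([
    ("submit_change", lambda: log.append("plan changed"), lambda: log.append("plan change reversed")),
    ("update_crm", failing_crm_update, None),
    ("notify_customer", lambda: log.append("notified"), None),
])
print(outcome)
```

The returned dictionary is the "precise status": which step failed, why, and what was rolled back.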

Step-by-step: Building your first agentic AI agent

To make this concrete, consider an agent that handles subscription downgrades and low-risk refunds.

Step 1: Write the job spec (not a model prompt)

Most teams start by describing what they want the agent to do. The better starting point is describing what the agent is allowed to do, and what it must never do.

Write the job as if a human will be held responsible for it, because someone will be.

A good job definition includes:

- What the agent handles: subscription downgrades, simple cancellations, refunds under a set amount.

- What “done” looks like: the plan change is recorded in the billing system, the CRM is updated, and the customer is notified with accurate details.

- Hard boundaries: no changes to enterprise or contract accounts, no refund beyond a limit, no action without identity verification.

- Stop conditions: disputes, fraud flags, missing records, policy ambiguity, or anything that forces judgment.

This makes reviews easier because people can react to clear boundaries. Legal, security, and frontline leaders can’t sign off on “be helpful.” They can sign off on “you may do X under Y conditions, and you must stop under Z conditions.”
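
A job spec written this way translates almost directly into data that reviewers can read and code can check. The sketch below is one possible encoding; the refund limit, tier names, and flag names are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JobSpec:
    handles: frozenset           # request types in scope
    max_refund: float            # hard limit; the amount used below is illustrative
    excluded_tiers: frozenset    # account types the agent must not touch
    stop_conditions: frozenset   # flags that force a handoff

    def check(self, request_type, account_tier, identity_verified,
              refund_amount=0.0, flags=()):
        """Return (allowed, reason) for a proposed piece of work."""
        if request_type not in self.handles:
            return False, "out of scope"
        if not identity_verified:
            return False, "identity not verified"
        if account_tier in self.excluded_tiers:
            return False, "excluded account type"
        if refund_amount > self.max_refund:
            return False, "refund above limit"
        hit = self.stop_conditions.intersection(flags)
        if hit:
            return False, "stop condition: " + ", ".join(sorted(hit))
        return True, "within job spec"


SPEC = JobSpec(
    handles=frozenset({"downgrade", "simple_cancellation", "refund"}),
    max_refund=50.0,
    excluded_tiers=frozenset({"enterprise", "contract"}),
    stop_conditions=frozenset({"dispute", "fraud_flag", "missing_record", "policy_ambiguity"}),
)

print(SPEC.check("refund", "standard", True, refund_amount=20.0))
```

Each branch maps to one line of the written job definition, so a sign-off on the document is also a sign-off on the check.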

Step 2: map success and failure paths

A workflow diagram usually shows the happy path. Your customers live in the failure path.

So map both.

Happy path: downgrade

- verify the customer

- retrieve the current subscription details

- check eligibility for change

- confirm what will happen and when

- submit the change in billing

- confirm the change took effect

- log the case and notify the customer

Now map the moments that create real risk:

- Identity can’t be verified. The system should stop immediately, not “try anyway.”

- The account has a contract lock or a dispute. The agent must explain what it can do inside policy and route the rest.

- Billing accepts the request but doesn’t apply it instantly. The agent must not tell the customer it’s done until it can confirm.

- An upstream system is down. The agent needs a safe retry limit and a clean handoff.

- The customer pivots mid-flow. “Actually cancel it” changes everything. The agent needs to re-check policy and limits.

These are not edge cases in CX. These are Tuesday.
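
The paths above can be sketched as a single function that stops at the first unsafe condition. Everything here is a simplification: `tools` is a dictionary of placeholder callables standing in for real integrations, and the status names and retry limit are assumptions.

```python
def run_downgrade(tools, customer_id, target_plan, max_retries=2):
    """Walk the downgrade path; return a (status, detail) tuple."""
    if not tools["verify_identity"](customer_id):
        return "stopped", "identity not verified"

    account = tools["get_subscription"](customer_id)
    if account.get("contract_lock") or account.get("dispute"):
        return "handoff", "contract lock or dispute on account"

    # The same reference is reused on every retry so billing can deduplicate.
    request_ref = f"{customer_id}:downgrade:{target_plan}"
    for attempt in range(1, max_retries + 2):
        try:
            tools["submit_change"](customer_id, target_plan, request_ref)
            break
        except ConnectionError:
            if attempt > max_retries:
                return "handoff", f"billing unavailable after {attempt} attempts"

    # Read back before telling the customer anything.
    current = tools["get_subscription"](customer_id)
    if current.get("plan") != target_plan:
        return "pending", "billing accepted the request but has not applied it"
    return "done", f"plan is now {target_plan}"
```

Only the final `done` status should trigger a "your plan has changed" message; `pending` and `handoff` call for different customer communication. A mid-flow pivot such as "actually cancel it" would mean starting a new run with fresh policy checks rather than continuing this one.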

Step 3: define a small set of tools

Tools are how the agent touches your business. Without them, it can only talk.

For a first agent, keep the tool set small, but make each tool clear enough that a non-engineer can understand what it does and why it exists. You’re trying to create predictability, not breadth.

A workable starter set:

- Get customer and subscription details. Inputs: customer identifier. Output: current plan, account status, contract flags, dispute flags.

- Check eligibility for downgrade or refund. Inputs: account status, policy version, requested action. Output: allowed or blocked, plus the reason.

- Submit subscription change. Inputs: customer, target plan, effective date, request reference. Output: confirmation that billing accepted the change, plus current status.

- Log a case note. Inputs: case ID and a structured summary. Output: a record reference.

- Send customer notification. Inputs: template and variables. Output: delivery status.

Two things matter here that most drafts skip because they sound “too technical,” but they’re exactly where CX risk hides:

- Confirmation: every write action must have a way to verify what happened.

- Do-once behavior: a retry must not create duplicate changes. If a refund or plan change is attempted twice, it should be detected and blocked at the tool layer.

You don’t need to describe how engineering implements that in this guide. You do need to state that it must exist, because it protects customers and protects the business.
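
Without going into implementation, the starter tool set can still be written down as explicit contracts that a non-engineer can read. The following is a sketch: the tool and field names are paraphrased from the list above, and the `ToolSpec` structure itself is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolSpec:
    name: str
    inputs: tuple    # required input field names
    outputs: tuple   # fields the tool promises to return
    writes: bool     # True if the tool changes a system of record


TOOLS = {
    spec.name: spec
    for spec in (
        ToolSpec("get_customer_details", ("customer_id",),
                 ("current_plan", "account_status", "contract_flags", "dispute_flags"), False),
        ToolSpec("check_eligibility", ("account_status", "policy_version", "requested_action"),
                 ("allowed", "reason"), False),
        ToolSpec("submit_subscription_change",
                 ("customer_id", "target_plan", "effective_date", "request_reference"),
                 ("accepted", "current_status"), True),
        ToolSpec("log_case_note", ("case_id", "summary"), ("record_reference",), True),
        ToolSpec("send_notification", ("template", "variables"), ("delivery_status",), True),
    )
}


def validate_call(tool_name: str, args: dict) -> list:
    """Return a list of problems; an empty list means the call is well-formed."""
    spec = TOOLS.get(tool_name)
    if spec is None:
        return [f"unknown tool: {tool_name}"]
    problems = [f"missing input: {f}" for f in spec.inputs if f not in args]
    problems += [f"unexpected input: {f}" for f in args if f not in spec.inputs]
    return problems


print(validate_call("submit_subscription_change", {"customer_id": "c-100"}))
```

Marking which tools write makes it easy to see where confirmation and do-once behavior are required.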

Step 4: decide how the agent moves forward

This is where teams often over-trust the model. They let it “figure it out.” In CX, that becomes unpredictable behavior the first time systems disagree or policy conflicts.

A safer approach is to make the agent’s decision logic legible.

At each step, the agent should be able to answer:

- What is the customer asking for, in one sentence?

- What do I already know, and what must I confirm before acting?

- Is the next action allowed under policy, or does it require review?

- If I take this action, how will I confirm it worked?

- If I cannot confirm, what is the safe next move?

This keeps the agent from drifting into improvisation. The model can still help interpret intent and choose the next step, but the system is not relying on intuition to manage risk.
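
One way to make that logic legible is to require a small structured record before any action runs. This is a sketch under assumptions: the `StepPlan` fields mirror the five questions, and the field names and the "allowed" value are illustrative.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class StepPlan:
    request_summary: str      # what the customer is asking for, in one sentence
    known_facts: tuple        # what is already known
    must_confirm: tuple       # what must be confirmed before acting
    policy_decision: str      # "allowed" or "needs_review"
    confirmation_method: str  # how success will be verified
    fallback: str             # safe next move if success cannot be confirmed


def ready_to_act(plan: StepPlan) -> tuple:
    """Return (ok, reason). The agent acts only when every question has an answer."""
    for f in fields(plan):
        if f.name in ("known_facts", "must_confirm"):
            continue  # these may legitimately be empty
        if not getattr(plan, f.name).strip():
            return False, f"unanswered: {f.name}"
    if plan.must_confirm:
        return False, "confirm first: " + ", ".join(plan.must_confirm)
    if plan.policy_decision != "allowed":
        return False, "requires review"
    return True, "proceed"


plan = StepPlan(
    request_summary="Customer wants to downgrade from pro to basic.",
    known_facts=("current plan is pro",),
    must_confirm=("identity",),
    policy_decision="allowed",
    confirmation_method="read subscription back from billing",
    fallback="hand off with pending status",
)
print(ready_to_act(plan))
```

The model can fill in the record, but the gate that reads it is ordinary code, and each record can be logged for review.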

Step 5: design the handoff so humans can continue without rework

In practice, handoffs fail because they force the human to reconstruct context.

So instead of thinking “handoff packet,” think: if I’m the human agent receiving this case, what would I need in front of me to finish it without repeating steps?

A useful handoff includes:

- What the customer requested, and what channel it came from

- What checks were completed, including identity status

- What tools were used, and what each one returned

- What actions were taken, with confirmation status

- What remains, and why the agent stopped

The goal is simple: the human should not have to re-ask the customer the same questions or re-run the same checks unless there’s a clear reason.
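
As a rough sketch, the handoff contents listed above could be captured in a structure like this. The field names and the sample values are assumptions for illustration.

```python
from dataclasses import dataclass, field, asdict
import json


@dataclass
class Handoff:
    request: str
    channel: str
    checks: dict                                     # check name -> result
    tool_calls: list = field(default_factory=list)   # [{"tool": ..., "result": ...}]
    actions: list = field(default_factory=list)      # [{"action": ..., "confirmed": bool}]
    remaining: list = field(default_factory=list)
    stop_reason: str = ""

    def to_json(self) -> str:
        return json.dumps(asdict(self), indent=2)

    def unconfirmed_actions(self) -> list:
        """Actions the human should verify before continuing."""
        return [a["action"] for a in self.actions if not a["confirmed"]]


handoff = Handoff(
    request="Downgrade from pro to basic",
    channel="chat",
    checks={"identity": "verified", "eligibility": "allowed"},
    tool_calls=[{"tool": "submit_subscription_change", "result": "accepted"}],
    actions=[{"action": "submit_subscription_change", "confirmed": False}],
    remaining=["confirm change in billing", "notify customer"],
    stop_reason="billing has not applied the change yet",
)
print(handoff.to_json())
```

The `unconfirmed_actions` helper points the human at the one thing worth re-checking, instead of the whole case.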

Step 6: choose how the agent shows up

The launch mode decides how accountability feels on day one.

1. Assist mode: the agent drafts the plan and the human decides whether to execute. This is where many teams start because it builds trust without forcing the organization to accept autonomy immediately.

2. Hybrid mode: the agent completes safe steps on its own, then pauses at defined decision points. This works well when you want speed, but you also want a person in the loop for money movement, identity-sensitive actions, or policy gray areas.

3. Autonomous mode: the agent completes the workflow end-to-end inside strict limits. This is earned, not declared. It follows repeated evidence that the agent can operate within limits, recover from failures, and leave behind decisions that teams can trust. It tends to work best when the workflow has clear rules, high consistency, and strong confirmation mechanisms.

Today, the real decision is not whether automation is possible, but where accountability should sit. Launch modes let you answer that with design, not hope.
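
In code, a launch mode can be a single gate in front of every execution. The sketch below assumes hypothetical step names for the "defined decision points" in hybrid mode.

```python
from enum import Enum


class LaunchMode(Enum):
    ASSIST = "assist"
    HYBRID = "hybrid"
    AUTONOMOUS = "autonomous"


# Assumption: these step names mark the decision points where hybrid mode pauses.
SENSITIVE_STEPS = frozenset({"issue_refund", "change_billing"})


def may_execute(mode: LaunchMode, step: str, within_limits: bool = True) -> bool:
    """Return True if the agent may run `step` without waiting for a human."""
    if mode is LaunchMode.ASSIST:
        return False                         # the human decides every execution
    if mode is LaunchMode.HYBRID:
        return step not in SENSITIVE_STEPS   # pause at sensitive steps
    return within_limits                     # autonomous, but only inside limits


print(may_execute(LaunchMode.HYBRID, "log_case_note"))
print(may_execute(LaunchMode.HYBRID, "issue_refund"))
```

Moving a workflow from assist to hybrid to autonomous is then a configuration change that can be reviewed and rolled back.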

Step 7: test before you trust

Before exposing the agent to real customers, you need to see how it behaves when things are imperfect.

Start with offline testing using real conversation transcripts and edge cases. Look for where the agent makes incorrect assumptions, skips validation steps, or takes actions it cannot confirm.

Then move to shadow mode. Let the agent run in parallel without taking action, while humans handle the workflow. Compare decisions. Where does the agent agree, and where does it diverge?
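
A shadow-mode comparison can start as a simple agreement report. The sketch below assumes each record pairs the agent's proposed decision with what the human actually did; the decision labels and sample data are invented.

```python
from collections import Counter


def compare_shadow(records):
    """records: iterable of (case_id, agent_decision, human_decision)."""
    records = list(records)
    diverged = [(cid, a, h) for cid, a, h in records if a != h]
    agreement = 1 - len(diverged) / len(records) if records else 0.0
    # Count which (agent, human) pairs disagree most often.
    patterns = Counter((a, h) for _, a, h in diverged)
    return {"agreement": agreement, "diverged": diverged, "patterns": patterns}


report = compare_shadow([
    ("case-1", "downgrade", "downgrade"),
    ("case-2", "refund", "handoff"),
    ("case-3", "downgrade", "downgrade"),
    ("case-4", "handoff", "handoff"),
])
print(report["agreement"], report["diverged"])
```

The divergent cases matter more than the headline rate: each one is either an agent error or a policy the humans apply that was never written down.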

If possible, introduce a small A/B slice where the agent handles a controlled percentage of traffic under strict limits.

Step 8: run a controlled rollout with a review habit

Don’t judge an agent by its best day. Judge it by how it behaves when systems are slow, customers are unclear, and policy creates friction.

Start with a narrow slice of traffic. Review it weekly. Expand only when the behavior looks consistent and explainable.
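
One common way to carve out a narrow, stable slice is to hash a case identifier into a bucket. This is a sketch, assuming cases have a stable string ID; the function name and bucket count are illustrative choices.

```python
import hashlib


def in_rollout(case_id: str, percent: int) -> bool:
    """Deterministically place a case in the agent's slice of traffic."""
    digest = hashlib.sha256(case_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100   # stable bucket from 0 to 99
    return bucket < percent


sample = [f"case-{i}" for i in range(1000)]
handled = sum(in_rollout(cid, 5) for cid in sample)
print(f"{handled} of {len(sample)} sample cases fall in a 5% slice")
```

Because the bucket depends only on the case ID, the same case always lands on the same side, and raising the percentage only adds cases to the slice rather than reshuffling them.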

What to look at in reviews:

- where the agent stops and why

- where it takes action and how often it can confirm success

- where failures cluster by system or policy

- where humans disagree with the agent’s plan

- where customers get confused by the communication

This keeps the agentic AI system you’re building grounded.

Common mistakes teams make when building their first agent

Most agent initiatives stall quietly. Trust thins out. Reviews get tense. People start saying, “It works… but.” That “but” usually traces back to design choices made early, when momentum was high and constraints felt negotiable.

Here are the patterns that surface as organizational friction when you build an agentic AI.

1. Treating the model as the product

It’s easy to anchor on the reasoning layer because that’s the visible part. You see intelligence and fluent responses. It feels like a breakthrough.

But in production, the model is only one piece of the system. The product is the workflow — the tools it can use, the boundaries it must respect, the confirmations it requires, and the logs it produces.

When teams over-index on the model, they tend to let it “decide” policy questions implicitly. That’s when behavior starts to go astray. The agent may technically complete the task, but it does so in ways that feel inconsistent with how the business operates.

The correction is simple: move policy and limits out of inference and into explicit system rules. Let the model interpret intent. Let the system enforce boundaries.

2. Expanding scope before stability

After the first few successful cases, momentum builds. If the agent can handle downgrades, why not upgrades? If it can issue small refunds, why not larger ones? If it works in one region, why not all?

The pressure to scale often outpaces the habit of review.

The result is often subtle degradation. More edge cases slip through. Logs become harder to interpret. Review cycles lengthen. Frontline teams start second-guessing outcomes.

A steadier approach is to expand laterally before expanding vertically.

Add adjacent workflows that use the same tools and policy logic. Prove consistency. Then widen boundaries. Autonomy that grows from observed behavior is easier to defend than autonomy declared in advance.

3. Designing handoff as an afterthought

Handoff is usually imagined as a fallback. In reality, it’s a core feature.

When handoff is poorly designed, humans inherit confusion. They re-verify identity, re-check eligibility and re-run steps because they’re unsure what the agent actually did. That erodes both efficiency and trust.

Strong handoff design treats the human as a continuation of the workflow. The agent should leave the case in a state that feels orderly: here’s what was asked, here’s what was checked, here’s what changed, here’s what remains.

If humans feel they’re cleaning up after the agent instead of collaborating with it, the program won’t scale.

4. Leaving ownership ambiguous

An agent that touches billing, CRM, and support workflows will inevitably cross team boundaries. Without a clear owner, decisions stall. Policy updates lag. Review findings linger unresolved.

Ownership here means one person accountable for scope, behavior, and performance. They decide when the agent’s boundaries expand. They decide when a rollback is necessary. They answer for outcomes.

Without that role, the agent becomes “everyone’s project,” which is often another way of saying it’s no one’s responsibility.

5. Chasing edge-case perfection

There’s a moment in most builds where the long tail becomes tempting. The rare exceptions start to dominate planning discussions. You begin designing for the 5% of cases that behave unpredictably.

That instinct is understandable. No one wants visible failure.

But over-optimizing for the rare case can slow down the common one. The first goal of an agent is to handle the consistent, well-defined majority reliably. The long tail can remain routed to specialists, with context provided.

Trying to automate everything at once often results in a system that feels complex and brittle, rather than focused and dependable.

Now, what ties these mistakes together is misplaced emphasis.

How to build an agentic AI that outperforms at customer experience

Agentic systems succeed when teams focus on clarity: clear boundaries, clear ownership, clear confirmation of actions, and clear review cycles. When those are in place, autonomy becomes something that can grow naturally.

If you’re thinking about building your first agent, there’s probably a mix of momentum and hesitation sitting together.

You can see the efficiency. You can see the scale. You can also see the risk of letting software move inside systems that customers depend on. That tension is healthy. It means you understand what’s at stake.

Building an agent asks you to formalize what your teams already do instinctively — apply rules, verify identity, confirm outcomes, escalate when unsure. The difference is that those instincts now need to live inside a system.

Teams that succeed approach this with patience. They define the workflow clearly. They enforce boundaries early. They review behavior before expanding it.

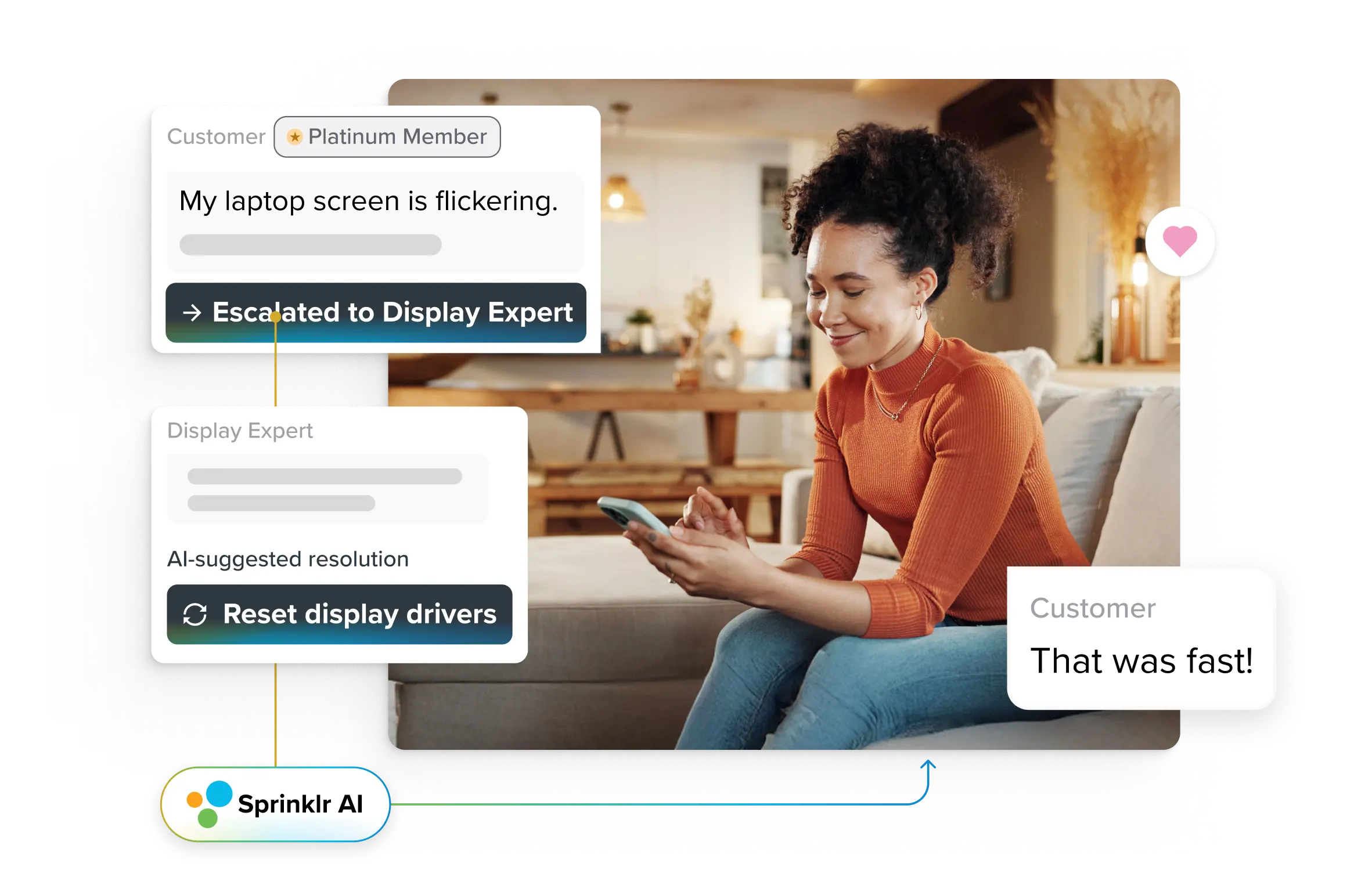

That’s the philosophy behind platforms like Sprinklr AI Agents. They’re built around structured workflows, policy boundaries, and full visibility across your CX stack, so the discipline you design on paper actually holds in production.

Frequently Asked Questions

How do you know if your team is ready to build agentic AI?

Readiness is less about technology maturity and more about operational clarity.

You are likely ready if you can clearly answer three questions:

- Do we have at least one workflow with a defined start and finish?

- Are the rules governing that workflow written down and agreed upon?

- Do our systems allow safe, programmatic access to the data and actions required?

If the workflow is vague, the policy is inconsistent, or system access is manual, the first step is alignment, not automation.

Readiness also includes cultural readiness. Teams must be willing to review outcomes, refine boundaries, and treat the agent as an evolving capability rather than a one-time deployment.

What skills does a team need to build agentic AI?

You don’t need a research lab. You need cross-functional fluency.

At minimum:

- Product ownership: someone who can translate business workflows into structured, testable definitions of success and boundaries.

- Engineering capability: experience with APIs, system integration, and failure handling. Agents touch real systems, and reliability matters.

- Operational insight: people who understand how the workflow behaves under pressure, including edge cases and escalation patterns.

- Governance awareness: someone who can align automation decisions with compliance, privacy, and risk expectations.

The most successful teams treat agent development as a product discipline with technical execution, not as an isolated AI experiment.

What should you have in place before you start building?

Before building anything, gather context.

You should have:

- Clear documentation of how humans complete the workflow today.

- Examples of typical and atypical cases.

- Defined policy rules, including limits and approval requirements.

- Historical data showing volume, resolution patterns, and common failure points.

- A map of which systems the workflow reads from and writes to.

This preparation does two things. It prevents surprises during implementation, and it reveals where ambiguity exists. Agents struggle in gray areas. Clarity up front reduces friction later.

What guardrails do teams in regulated environments need?

In regulated environments, guardrails are not optional layers. They are structural components.

At a minimum, regulated teams should ensure:

- Explicit action limits: hard boundaries on financial changes or data exposure.

- Role-based access controls: agents can only access what is necessary for the task.

- Audit trails: every action is logged with timestamp, context, and outcome.

- Human approval workflows: defined checkpoints for higher-risk actions.

- Recovery mechanisms: the ability to reverse or escalate when a problem is detected.

- Periodic review: regular audits of agent behavior and boundary adherence.

Regulation does not prevent agent adoption. It shapes how autonomy is introduced and monitored.

How do you scale beyond the first agent?

Scaling is less about adding more agents and more about strengthening the system that supports them.

The first agent teaches you how boundaries behave in practice. The second and third should reuse what you learned: tool definitions, policy structures, logging standards, and review processes.

To scale responsibly:

- Expand into workflows that share tools and governance logic before branching into entirely new domains.

- Centralize visibility so leadership can see performance across agents in one place.

- Maintain a clear owner for each workflow, even as infrastructure is shared.

- Increase autonomy gradually, based on observed stability rather than roadmap ambition.

When scaling is treated as a structured extension rather than a multiplication exercise, agents remain manageable.