The Future of Agentic AI: What Enterprises Must Prepare for Next

Enterprises are moving fast toward agentic AI, and the urgency is rational. After years of copilots, assistants, and workflow automations that still required human orchestration, agentic systems promise something fundamentally different: software that can plan, decide, and act across systems with minimal supervision. For organizations under pressure to do more with flatter teams and tighter margins, that promise is hard to ignore.

The result is a familiar pattern. Proofs of concept are everywhere. Agent frameworks are being tested in sandboxes. Functional teams are launching pilots across the supply chain, finance, IT operations, and customer service. Boardrooms are hearing "agentic" with increasing frequency, often framed as the next inevitable platform shift after cloud and generative AI.

What is missing from most of these conversations is a clear view of what happens next.

Deploying an agentic AI pilot is not the hard part. The harder question is what the future of agentic AI looks like once agents move beyond demos and begin operating inside real enterprise environments with real constraints. Data fragmentation, policy enforcement, auditability, failure handling, and human accountability do not disappear when an agent can reason and act. In many cases, they intensify.

This is the inflection point enterprises are approaching now. The future of agentic AI will not be defined by how autonomous AI agents become in isolation, but by how well organizations govern, scale, and integrate them into existing operating models. Early pilots may show strong task completion rates, but long-term value will depend on whether agents can operate predictably, collaborate with humans and other agents, and improve outcomes without introducing new operational risk.

For companies already running agentic AI pilots, the next phase is about harder questions.

- Where should autonomy stop?

- How should agent performance be measured beyond task success?

- How do agents learn without drifting from business intent?

- And how should you prepare for a future where decision-making is shared between humans and machines?

Let's answer these questions.

The maturity curve of agentic AI

Most discussions around agentic AI treat maturity as a question of model capability. In practice, enterprise maturity has very little to do with how intelligent an agent is in isolation. It is defined by how safely, predictably, and economically that agent can operate inside a complex organization.

Across industries, the maturity curve of agentic AI is beginning to take a clear shape.

Stage 1: Task-level autonomy (Experimental)

This is probably where you and most companies are today. Agents are deployed to execute narrow, well-scoped tasks such as generating reports, resolving standard service tickets, triggering replenishment workflows, or performing IT remediation steps. The focus is on proving feasibility rather than durability. Human oversight is constant, guardrails are often manual, and failures are tolerated as part of learning.

Success at this stage is typically measured by task completion and speed. Agents that “work” are celebrated, even if they require frequent intervention or struggle under edge cases.

💡Key takeaway

The risk at this stage is false confidence. Task success in controlled environments does not translate to operational readiness at scale.

Stage 2: Workflow-level agency (Operational)

In this phase, agents move beyond isolated tasks and begin operating across multi-step workflows. They interact with multiple systems, hand off work to other agents or humans, and make conditional decisions based on business context.

This is where agentic AI starts to create real operational leverage, but also where complexity increases sharply. You begin investing in orchestration layers, policy enforcement, logging, and human-in-the-loop controls. Agent behavior is no longer evaluated solely on outcomes, but also on consistency, explainability, and failure recovery.

💡Did you know

Many pilots stall at this stage. Not because agents fail technically, but because organizations underestimate the governance and operational discipline required to run agents continuously.

Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear business value, and risk mismanagement. That forecast points to the same gap: operational discipline, not agent capability, is what most often decides whether a pilot survives.

Stage 3: Domain-level autonomy (Scalable)

At this stage, agentic AI operates as a persistent capability within a defined business domain such as procurement, customer service operations, or IT service management. Agents are aware of domain constraints, historical context, and organizational policies. They learn within bounded limits and improve decisions over time.

Human involvement shifts from execution to supervision and exception handling. Performance measurement evolves from task metrics to business outcomes, such as cost reduction, cycle-time improvement, and risk containment.

🔭 Reality check

Very few enterprises have reached this stage today. Those that have treat agentic AI less like a tool and more like a digital workforce that requires enablement, governance, and continuous evaluation.

Stage 4: Enterprise-level agency (Transformational)

This is the future state most companies are implicitly aiming for, but rarely articulate clearly. Here, multiple agent systems collaborate across domains, negotiate priorities, and dynamically allocate work in line with organizational goals. Decision-making becomes distributed by design, with humans setting intent, boundaries, and accountability frameworks rather than directing individual actions.

Reaching this stage requires more than better models. It demands new operating models, redesigned accountability structures, and a clear answer to a difficult question: who is responsible when autonomous systems make consequential decisions?

This stage will define the long-term future of agentic AI, but it will emerge slowly, unevenly, and only in organizations willing to rethink how work gets done.

The future of agentic AI over the next 24 months

The future of agentic AI will not unfold as a straight march toward full autonomy. Over the next 24 months, it will be shaped by consolidation, operational friction, and selective progress rather than unchecked acceleration. As more agentic AI pilots move into live environments, you will find that the primary constraints are organizational and operational, not technical.

- Early momentum will slow once agents are exposed to real-world variability. Edge cases will surface at scale. Cross-system dependencies will become visible. Agents that performed well in controlled pilots will struggle when required to operate continuously, recover from failure, and coordinate with humans and other agents. This is the first signal that agentic AI is colliding with enterprise reality.

As a result, the future of agentic AI will briefly move sideways before it moves forward. Businesses will pause aggressive expansion and redirect investment toward orchestration layers, policy enforcement, auditability, and intervention mechanisms. What may look like caution from the outside will be a necessary correction to avoid brittle autonomy that cannot be trusted at scale.

- Another defining shift in the future of agentic AI will be how success is measured. Task completion and speed will no longer be sufficient indicators of value. Businesses will increasingly prioritize predictability, failure containment, escalation quality, and outcome stability. Agents that perform fewer actions but do so consistently, and within defined boundaries, will outperform those optimized for maximum autonomy.

- Human roles will also evolve in parallel. The future of agentic AI is not hands-off automation, but structured human–agent collaboration. Humans will move from executing tasks to supervising behavior, defining intent, and resolving exceptions. Organizations that formalize these roles early will progress faster than those that treat human oversight as a temporary necessity.

💰Two cents from Sprinklr

Over the next two years, this phase will create a clear divide. Some companies will remain in a perpetual state of experimentation, cycling through pilots without lasting impact. Others will narrow focus to a small number of domains where agentic AI can be governed, measured, and embedded into core operations. These decisions, more than any model upgrade, will determine who captures long-term value.

This is the near-term future of agentic AI: slower than the hype suggests, more disciplined than early pilots imply, and far more consequential for how enterprises operate.

Design principles shaping the future of agentic AI

The following principles are already emerging among early adopters and will increasingly define who successfully scales agentic AI.

1. Design for bounded autonomy, not maximum autonomy

In the future of agentic AI, the most valuable systems will not be those that act with the greatest freedom, but those that operate within clearly defined boundaries. Autonomy must be explicitly scoped by domain, authority, and risk tolerance.

Enterprises that treat autonomy as a tunable parameter rather than a binary choice will adapt faster. Agents should know not only what they can do, but when they must stop, escalate, or defer. Bounded autonomy reduces failure blast radius and increases trust, which ultimately enables wider deployment.

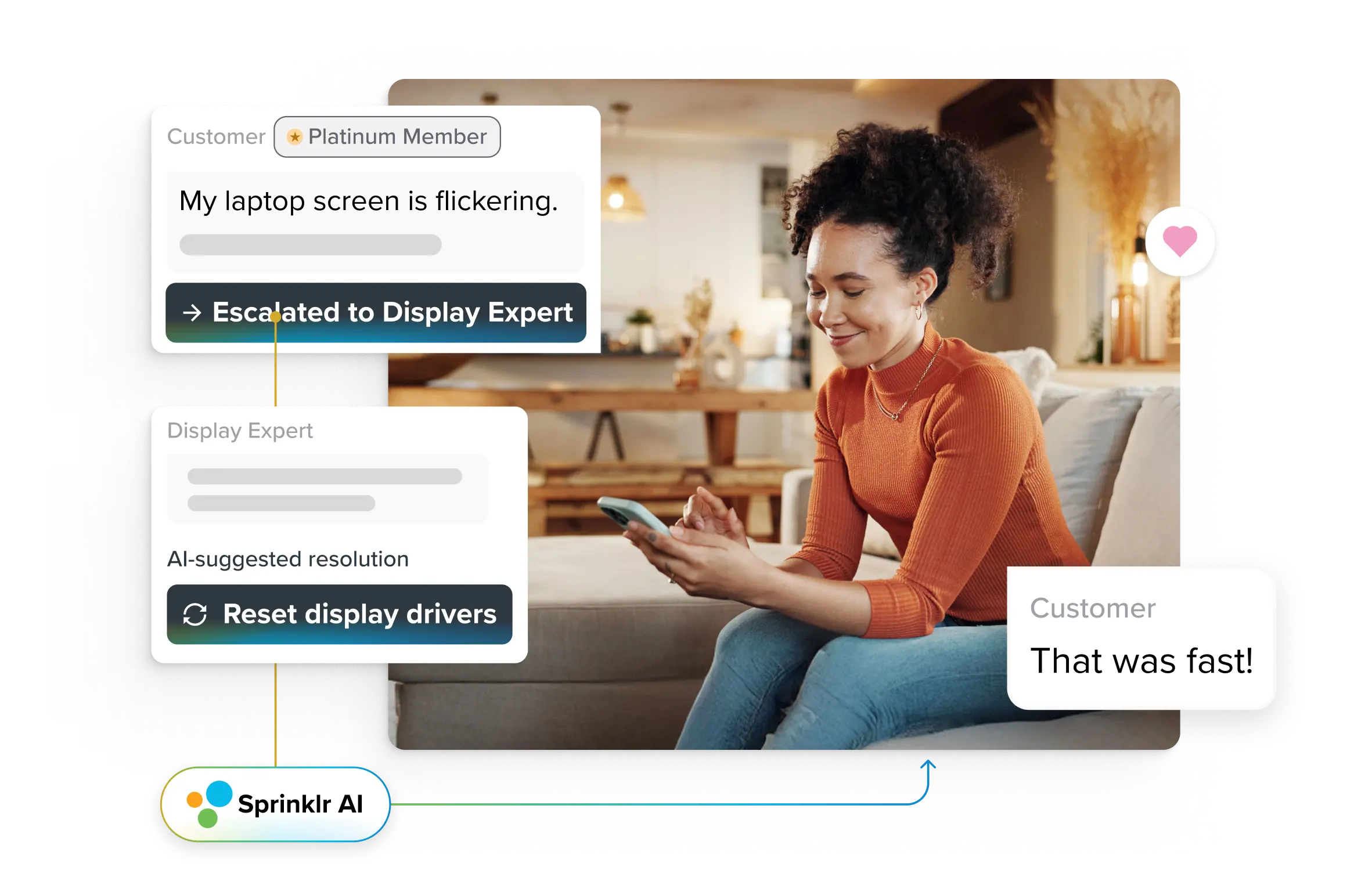

For example, in a contact center, an agentic system may be authorized to diagnose issues, attempt resolution, and trigger predefined remediation steps. However, the moment a case involves regulatory exposure, unusual sentiment patterns, or financial adjustments beyond a threshold, the agent is required to pause and escalate with full context. The agent remains autonomous within routine boundaries, while humans retain control over high-risk decisions.
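The contact center example can be sketched as a small policy check. This is a minimal illustration, not a reference implementation: the `Case` fields, the `AutonomyPolicy` class, and the `max_adjustment` threshold are hypothetical names chosen for this sketch, and real escalation rules would come from your own risk and compliance teams.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    PROCEED = "proceed"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class Case:
    """Hypothetical case record; field names are illustrative only."""
    regulatory_exposure: bool
    unusual_sentiment: bool
    adjustment_amount: float  # requested financial adjustment


@dataclass(frozen=True)
class AutonomyPolicy:
    """Boundary within which the agent may act on its own."""
    max_adjustment: float  # assumed threshold, tuned per domain and risk tolerance

    def evaluate(self, case: Case) -> tuple[Decision, list[str]]:
        """Return the decision plus the reasons, so escalations carry context."""
        reasons = []
        if case.regulatory_exposure:
            reasons.append("regulatory exposure")
        if case.unusual_sentiment:
            reasons.append("unusual sentiment pattern")
        if case.adjustment_amount > self.max_adjustment:
            reasons.append("financial adjustment above threshold")
        decision = Decision.ESCALATE if reasons else Decision.PROCEED
        return decision, reasons


policy = AutonomyPolicy(max_adjustment=100.0)
routine = Case(regulatory_exposure=False, unusual_sentiment=False, adjustment_amount=25.0)
risky = Case(regulatory_exposure=True, unusual_sentiment=False, adjustment_amount=250.0)

print(policy.evaluate(routine))
print(policy.evaluate(risky))
```

Because the threshold lives in the policy object rather than in the agent, autonomy can be widened or narrowed by changing one value, which is one way to treat it as a tunable parameter.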

2. Separate reasoning from execution by design

As agentic AI systems become more embedded, you will learn that reasoning and execution must be decoupled. Agents that decide and act within the same opaque loop are difficult to audit, debug, or govern.

The future of agentic AI favors architectures where intent, planning, and action are distinct layers. This separation allows you to inspect decisions, enforce policy, and intervene without dismantling the entire system. It also creates clearer accountability when outcomes matter.

For example, in an operations workflow, an agent may determine that inventory should be reallocated based on demand signals and supply constraints. That reasoning can be reviewed, simulated, or overridden before execution. The execution layer then applies only approved actions, such as updating ERP records or triggering shipments, under policy controls. If an error occurs, teams can correct the decision logic without disrupting downstream execution.
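One way to picture this separation is a planner that only returns proposed actions and an executor that only applies what policy allows. The sketch below is a toy under stated assumptions: `ProposedAction`, `plan_reallocation`, `Executor`, and the action kind `"trigger_shipment"` are hypothetical, and the shortfall heuristic stands in for whatever reasoning a real agent would perform.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class ProposedAction:
    """Output of the reasoning layer: a plan, not a side effect."""
    kind: str        # e.g. "update_erp_record", "trigger_shipment"
    payload: dict
    rationale: str   # reviewable explanation of why the action was proposed


def plan_reallocation(demand: dict, stock: dict) -> list[ProposedAction]:
    """Reasoning layer (toy heuristic): propose shipments to sites short on stock."""
    actions = []
    for site, needed in demand.items():
        on_hand = stock.get(site, 0)
        shortfall = needed - on_hand
        if shortfall > 0:
            actions.append(ProposedAction(
                kind="trigger_shipment",
                payload={"site": site, "units": shortfall},
                rationale=f"demand {needed} exceeds stock {on_hand}",
            ))
    return actions


@dataclass
class Executor:
    """Execution layer: applies only actions whose kind is allowed by policy."""
    allowed_kinds: set
    applied: list = field(default_factory=list)
    rejected: list = field(default_factory=list)

    def run(self, actions: list[ProposedAction]) -> None:
        for action in actions:
            if action.kind in self.allowed_kinds:
                self.applied.append(action)   # a real system would call ERP or shipping here
            else:
                self.rejected.append(action)  # held for human review instead of executed


plan = plan_reallocation({"north": 120, "south": 40}, {"north": 100, "south": 60})
executor = Executor(allowed_kinds={"trigger_shipment"})
executor.run(plan)
print(executor.applied)
```

The plan is plain data, so it can be logged, simulated, or overridden before `run` is ever called, and the planning heuristic can be corrected without touching the executor.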

3. Make governance a first-class system capability

Governance cannot be retrofitted onto agentic systems once they are live. In the future of agentic AI, governance will be built in from the start, embedded into how agents are authorized, monitored, and evaluated. This includes policy enforcement, logging, explainability, and escalation paths that are native to the system rather than added as external controls. If you treat governance as infrastructure, not process, you will scale faster with less risk.
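As a rough illustration of governance living inside the system, the sketch below routes every action through a single method that checks authorization and writes an audit entry, whether or not the action is allowed. The `GovernedAgent` class, its fields, and the action names are hypothetical and exist only for this example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class GovernedAgent:
    """Agent wrapper where authorization and logging are part of acting."""
    agent_id: str
    permissions: frozenset                      # actions this agent is authorized to take
    audit_log: list = field(default_factory=list)

    def act(self, action: str, reason: str) -> bool:
        """Record the attempt, then report whether the action was authorized."""
        allowed = action in self.permissions
        self.audit_log.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent": self.agent_id,
            "action": action,
            "reason": reason,                   # kept so decisions can be explained later
            "outcome": "executed" if allowed else "escalated",
        })
        return allowed


agent = GovernedAgent(agent_id="service-agent-1", permissions=frozenset({"issue_refund"}))
agent.act("issue_refund", reason="duplicate charge confirmed")
agent.act("close_account", reason="customer request")

for entry in agent.audit_log:
    print(entry["action"], "->", entry["outcome"])
```

Since there is no code path that acts without logging, the audit trail does not depend on teams remembering to add it later.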

4. Measure agents by stability, not activity

Traditional automation metrics reward volume and speed. Agentic AI breaks that model. In the future of agentic AI, performance will be measured by predictability, recovery behavior, and outcome stability over time.

An agent that completes fewer tasks but behaves consistently under stress is more valuable than one that maximizes throughput at the cost of volatility. Businesses that redesign KPIs around stability and business impact will avoid the trap of noisy autonomy.

For example, in a customer service environment, an agent that resolves slightly fewer cases but maintains consistent resolution quality during demand spikes, degrades gracefully under uncertainty, and escalates edge cases early will outperform a high-throughput agent that oscillates between success and failure. Stability reduces rework, protects customer trust, and lowers downstream operational costs.
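To make the contrast concrete, the sketch below compares two agents by average resolution rate and by how much that rate swings from period to period. The numbers are invented for illustration, and using the population standard deviation as a volatility measure is an assumption of this sketch, not a prescribed KPI.

```python
from statistics import mean, pstdev


def stability_report(resolution_rates):
    """Summarize per-period resolution rates as an average and a volatility figure."""
    return {
        "mean": mean(resolution_rates),
        "volatility": pstdev(resolution_rates),  # population standard deviation
    }


# Made-up per-period resolution rates for two hypothetical agents.
steady_agent = [0.80, 0.78, 0.79, 0.81, 0.80]
spiky_agent = [0.95, 0.60, 0.97, 0.55, 0.98]

steady = stability_report(steady_agent)
spiky = stability_report(spiky_agent)

print("steady:", steady)
print("spiky: ", spiky)
```

In this made-up data the spiky agent has the slightly higher average, yet its volatility is far larger, which is the pattern a throughput-only KPI would miss.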

5. Redesign human roles, not just workflows

The future of agentic AI is inseparable from the future of human work. As agents take on execution, human roles must shift toward supervision, intent definition, and exception handling. Organizations that fail to formalize these roles will experience friction, mistrust, and shadow intervention. Those that explicitly design human–agent collaboration models will unlock higher leverage and clearer accountability.

6. Scale selectively before scaling broadly

One of the most important design principles shaping the future of agentic AI is restraint. Enterprises that attempt to deploy agents across every function too quickly dilute focus and amplify operational risk. The more effective path is selective scaling: choosing a small number of domains where autonomy creates durable advantage, governance is feasible, and outcomes are measurable.

For example, you may deploy agentic AI deeply within customer service to own case resolution end to end, while keeping marketing, finance, and HR in assisted or semi-automated modes. This allows you to harden governance, refine escalation models, and prove outcome impact in one domain before expanding. Depth in a single function builds institutional confidence that broad, shallow deployments never achieve.

How agentic AI will transform key business functions by 2030

By 2030, the future of agentic AI will be defined by systems that own outcomes, not tools that assist tasks. Agentic setups will convert isolated productivity gains into durable business impact by executing multi-step work across systems under defined guardrails. The shift will not be uniform across functions, but the pattern will be consistent: humans set intent and boundaries; agents execute and adapt.

| Business Function | Today | With Agentic AI by 2030 |
| --- | --- | --- |

What agentic AI leadership will look like three years from now

In three years, leadership in the future of agentic AI will not be defined by how many agents you’ve deployed or how autonomous they appear in demos. It will be defined by how deliberately autonomy is designed, governed, and held accountable inside the organization.

Agentic AI leaders will distinguish themselves in three visible ways.

📌 First, they will treat autonomy as a managed asset. Rather than pushing agents to act more independently, they will focus on where autonomy creates a durable advantage and where it introduces unacceptable risk. Leaders will invest in systems that allow autonomy to expand and contract based on context, confidence, and performance, not ambition.

📌 Second, they will institutionalize accountability. In the future of agentic AI, responsibility will not be abstract or shared vaguely between humans and machines. Enterprises at the front of the curve will define who owns agent behavior, who reviews decisions, and how failures are escalated and corrected. This clarity will enable trust, both internally and with regulators, customers, and partners.

📌 Third, they will redesign leadership itself. Executives will shift from managing execution to managing intent. Instead of directing how work gets done, they will focus on defining the goals, constraints, and trade-offs within which agentic systems operate. The ability to set clear intent, design boundaries, and evaluate outcomes will become a core leadership skill.

Enterprises that lag will show a different pattern. They will accumulate disconnected agents, fragile workflows, and manual overrides that undermine confidence. Autonomy will remain superficial, constrained not by technology but by the organization’s inability to trust its own systems.

The future of agentic AI will not belong to the most aggressive adopters. It will belong to those that learn how to govern autonomy without suffocating it, and how to scale intelligence without losing control. That is what agentic AI leadership will look like: quietly operational, deeply intentional, and structurally embedded into how businesses run.

To learn about everything Sprinklr has built to keep you future-proof, schedule a session with our experts today!

Frequently Asked Questions

Customer service leaders should measure adaptive AI success beyond automation rates. Focus on outcome metrics such as first-contact resolution, average handle time stability, backlog reduction, and escalation quality. Track how often AI resolves issues end-to-end without human rework, how predictably it behaves under volume spikes, and whether customer satisfaction improves over time. Reliable performance and controlled failure matter more than raw deflection numbers.

Implementing agentic AI in a contact center shifts costs rather than simply reducing them. While it lowers long-term labor and rework costs, it introduces upfront investment in orchestration, governance, integration, and monitoring. Costs rise initially as systems are hardened for reliability, but mature deployments reduce handling time, backlog growth, and operational volatility over time.

Adaptive AI integrates with existing CRM and support platforms through APIs, event triggers, and policy layers rather than replacing them. It reads customer context, takes actions across systems, and writes outcomes back into the CRM. Over time, it coordinates workflows, enforces rules, and escalates exceptions, while existing platforms remain the system of record.

Yes. Adaptive AI improves employee satisfaction by removing repetitive, low-value work and reducing constant firefighting. Agents handle routine cases and prepare context for complex issues, allowing humans to focus on judgment-heavy interactions. For customers, this translates into faster resolutions, fewer handoffs, and more consistent experiences across channels.

Data privacy and compliance are ensured by designing adaptive AI with strict access controls, policy enforcement, and auditability from the start. Customer data usage is scoped, logged, and monitored continuously. Learning is bounded to approved datasets and behaviors, with human review for sensitive actions, ensuring adaptation never bypasses regulatory or organizational controls.