Agentic AI in Customer Experience: The Upgrade You Need

Key takeaways

- Agentic AI changes CX when it finishes the task, not when it simply flags, drafts, or routes.

- The real barrier is not model capability. It is whether your processes, exceptions, and decision logic are clear enough for a system to act on safely.

- The best early use cases are high-volume, low-risk jobs with clear rules, like order updates, billing fixes, and guided troubleshooting.

- Strong agentic CX depends on a solid data layer: identity, live signals, journey context, consent, and permission controls.

- Over the next year, leading teams will treat AI less like an assistant and more like a supervised system of action across the customer journey.

Most of what you've built still needs you. The automation flags an issue—then waits. The chatbot qualifies intent—then routes. Something always lands back on a person's desk for the actual decision.

That's the problem nobody talks about. Not capability. Completion.

Agentic AI in customer experience changes this by simply finishing things. Acting on context. Closing loops that currently stall out in queues and handoffs.

This piece walks through what agentic AI in CX looks like in onboarding, support, loyalty, and personalization, framed as operational changes you'd actually feel in your workflow.

- What does “agentic AI in customer experience” really mean today?

- Where agentic AI delivers value across the CX journey

- High-impact use cases of agentic AI in customer experience

- The data layer behind agentic AI in customer experience

- How leading brands are already using agentic AI in CX

- Agentic AI in customer experience: The next 12 months

What does “agentic AI in customer experience” really mean today?

The word "agent" gets thrown around loosely. A chatbot with a personality isn't an agent. An LLM drafting replies for your team isn't either. The distinction matters because it changes what you're actually buying—and what you're accountable for when it breaks.

Here's what separates the layers:

| | Chatbots | LLM Copilots | Agentic AI in CX |
| --- | --- | --- | --- |
| Core function | Matches intent to scripted flows | Generates contextual responses | Perceives, decides, executes |
| Who acts? | Human (after handoff) | Human (after review) | The system itself |
| System access | Read-only or none | Read-only, suggests actions | Read-write across tools |
| Breaks when... | Customer goes off-script | Human bottleneck returns | Guardrails are poorly scoped |
| Best for | High-volume deflection | Speeding up agent work | End-to-end task resolution |

The table looks clean. Reality isn't. Most CX orgs sitting at the copilot layer assume scaling to agentic is a deployment decision. It's not. It's a legibility problem.

McKinsey research found that nearly 70% of contact center workers say at least a quarter of what they do daily isn't documented anywhere. It's passed down like folklore—workarounds, judgment calls, soft exceptions. Your LLM can draft a response because language is pattern-matchable. An agentic system can't act on a process that's never been made explicit.

So what does acting on a customer drop-off actually require?

A customer stalls mid-onboarding. For the system to intervene, it needs to know what "stalled" means in your context. Which steps are skippable? Which alternate paths exist? Whether shortcutting now creates friction later. That logic lives in your team's heads—not your workflow tool.

The gap between "AI that acts" and "AI that acts well" is the distance between documented process and tribal knowledge. Until that's closed, you're scaling risk alongside capability.

We want agentic AI in customer experience to act when it sees a drop-off, not just report it. What would that loop look like?

A drop-off loop only works when insight turns into motion fast enough to stay relevant. The agent first recognizes hesitation as it happens, reading pauses, retries, and stalled progress as uncertainty rather than exit. It then interprets that signal in context, factoring journey step and recent intent to gauge whether intervention makes sense. Decision calibration follows, where the agent chooses how visible the response should be, or whether restraint protects the experience. Execution happens immediately through a small, targeted action that removes friction without disruption. The loop closes when the agent validates what happened next and adjusts future behavior based on the outcome, not the assumption.
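The five stages above can be sketched in a few lines of Python. This is a minimal illustration, not a reference design: the `SessionSignal` fields, the per-step pause table, the 50/50 weighting, and the 0.25 and 0.6 cut-offs are all assumptions chosen for readability. Execution (stage four) is channel-specific and is left out.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    STAY_QUIET = "stay_quiet"
    INLINE_HINT = "inline_hint"
    OFFER_HELP = "offer_help"


@dataclass
class SessionSignal:
    step: str             # journey step the customer is on
    idle_seconds: float   # time since the last interaction
    retries: int          # failed attempts on this step
    recent_intent: float  # 0.0 (browsing) to 1.0 (clearly trying to finish)


# Hypothetical tuning: how long a pause is normal on each step.
NORMAL_PAUSE_SECONDS = {"upload_id": 120.0, "enter_address": 30.0}


def hesitation_score(signal: SessionSignal) -> float:
    """Stages 1-2: read pauses and retries relative to the journey step."""
    normal = NORMAL_PAUSE_SECONDS.get(signal.step, 45.0)
    pause_part = min(signal.idle_seconds / normal, 2.0) / 2.0  # scaled to 0..1
    retry_part = min(signal.retries, 3) / 3.0                  # scaled to 0..1
    return 0.5 * pause_part + 0.5 * retry_part


def decide(signal: SessionSignal) -> Action:
    """Stage 3: calibrate visibility. Restraint is a valid outcome."""
    score = hesitation_score(signal) * signal.recent_intent
    if score < 0.25:
        return Action.STAY_QUIET
    if score < 0.6:
        return Action.INLINE_HINT
    return Action.OFFER_HELP


def record_outcome(history: list, action: Action, completed_step: bool) -> float:
    """Stage 5: log what happened next; return the success rate for this action."""
    history.append((action, completed_step))
    relevant = [done for act, done in history if act == action]
    return sum(relevant) / len(relevant)
```

The success rate returned by `record_outcome` is the piece that would feed back into the thresholds over time, so the agent adjusts to observed outcomes instead of assumptions.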

Where agentic AI delivers value across the CX journey

The gap between AI-assisted and agentic shows up in execution. In the moments where someone either gets stuck or moves forward. Where repetition disappears. Where anticipation replaces reaction.

Let’s ground this.

1. Onboarding journeys

Most onboarding flows are designed for the ideal path—everything filled in correctly, every document ready, every step completed in sequence.

Real customers don't work that way.

They get interrupted. They don't have their ID handy. They fat-finger their email address. They pause on a step because the wording is confusing, and then they leave.

Agentic AI changes this by doing the work the customer shouldn't have to do.

If someone's autofill only catches half their address, the agent can pull enrichment data and complete the rest, with no extra clicks. Missing documents trigger specific guidance, and when a customer gets stuck at any step, the agent steps in with contextual help.

So instead of waiting for failure, the system predicts where friction concentrates and removes it early.

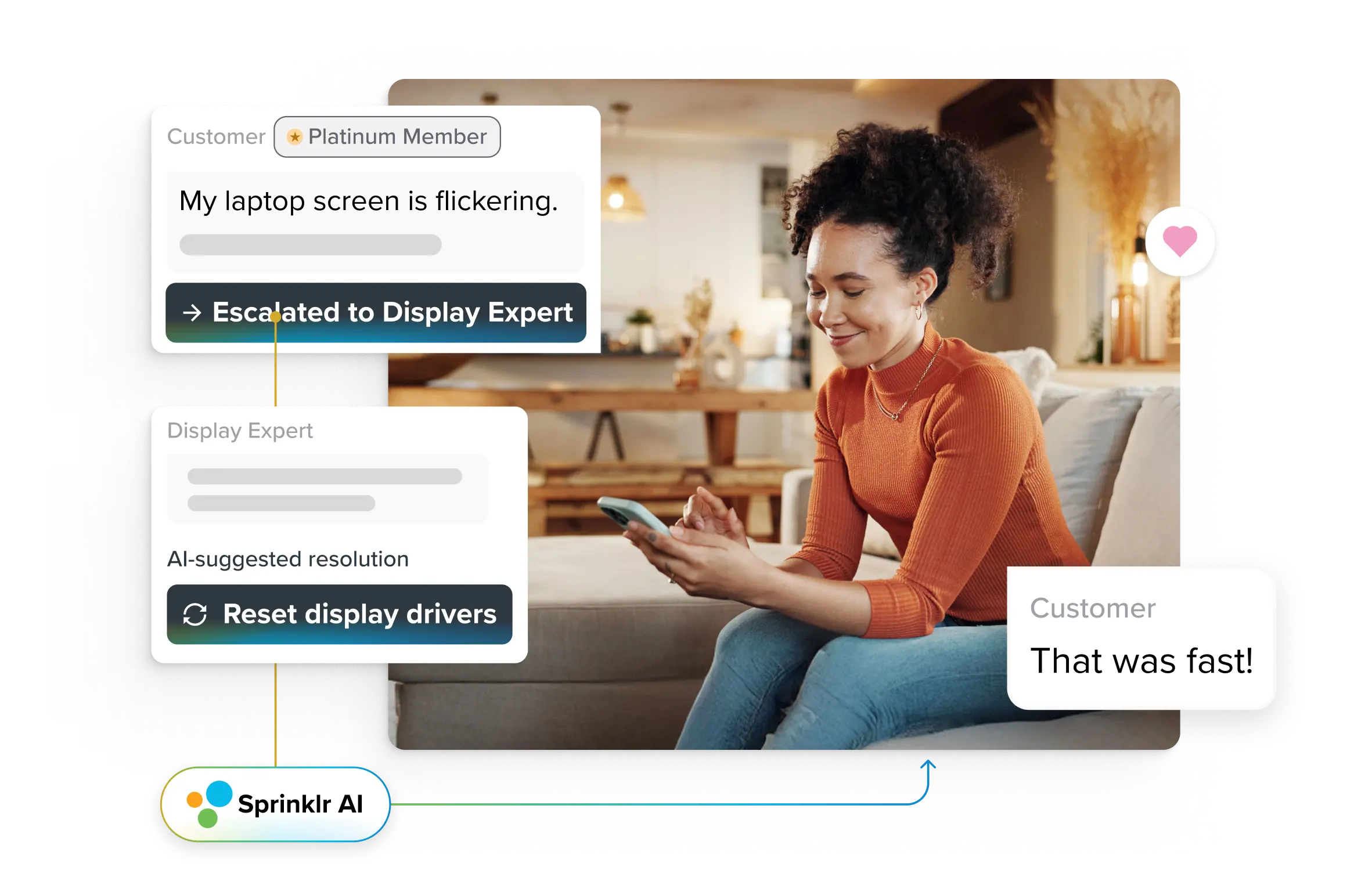

2. Support and issue resolution

This is where most teams start with agentic AI—and for good reason. The ROI is tangible. The volume is high. The pain is obvious.

But you don't want your chatbot to deflect tickets. That framing misses the point.

The goal is to close the loop faster for simple things and make complex things easier to resolve when humans do step in.

A duplicate charge email arrives. Traditionally, it queues, gets reviewed, and is refunded days later. With agentic logic, the system validates the charge instantly and auto-refunds within policy thresholds. Confirmation arrives in minutes.
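As a rough sketch of what "validates the charge and auto-refunds within policy thresholds" could mean in code, consider the following. The `Charge` fields, the 10-minute duplicate window, and the refund limit are hypothetical; real values would come from finance and risk policy.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Charge:
    charge_id: str
    customer_id: str
    amount_cents: int
    merchant_ref: str
    created_at: datetime


# Hypothetical policy values, for illustration only.
AUTO_REFUND_LIMIT_CENTS = 5_000
DUPLICATE_WINDOW = timedelta(minutes=10)


def find_duplicate(charge, history):
    """Return an earlier charge matching on customer, amount, and merchant
    reference within the duplicate window, or None."""
    for other in history:
        if other.charge_id == charge.charge_id:
            continue
        same = (other.customer_id == charge.customer_id
                and other.amount_cents == charge.amount_cents
                and other.merchant_ref == charge.merchant_ref)
        gap = charge.created_at - other.created_at
        if same and timedelta(0) <= gap <= DUPLICATE_WINDOW:
            return other
    return None


def resolve_duplicate_claim(charge, history):
    """Auto-refund only when the duplicate is verifiable and under the limit."""
    if find_duplicate(charge, history) is None:
        return "needs_human_review"  # claim not verifiable from data alone
    if charge.amount_cents <= AUTO_REFUND_LIMIT_CENTS:
        return "auto_refund"
    return "needs_human_review"      # valid duplicate, but above the limit
```

Both failure paths route to a person, which matches the idea of acting fast only where it is safe.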

Someone says, "It's not working."

That phrase is the bane of every support team. It means nothing and everything at once.

Traditionally, this kicks off a long thread of clarifying questions. What device? What browser? What were you trying to do? Screenshots? Eventually, it gets escalated to Tier 2.

Agentic AI in CX doesn't start from zero. Before the first response goes out, the agent pulls session data and device telemetry. It knows what the customer was doing when things broke. It sees the error state.

Sometimes that's enough to push a fix automatically. Sometimes it's not—but when the issue does escalate, the next human in the chain inherits full context.

This is authority design in practice. Fast when safe. Structured where risky.

3. Proactive experience optimization

Everything above is still reactive—something happens, the system responds. The real leverage comes when agents act before a problem becomes a ticket.

This requires detection, judgment, and action.

- Detection means watching for signals that something is going sideways. Someone abandons checkout for the second time this month. Someone rage-clicks through a flow that should be simple. Someone goes silent after showing high intent.

- Judgment means knowing what to do about it. Not every signal deserves a response. Some people just changed their minds. Others got distracted. The agent has to learn which patterns warrant intervention—and what kind.

- Action means actually doing something. Not flagging it for a human to follow up in three days. Sending the message. Applying the small incentive. Triggering the help prompt. Closing the loop.

Here's a real example.

Someone adds an item to their cart, starts checkout, and drops off at the payment step. This is the second time it's happened.

The agent looks at the account and sees they have a stored payment method—but it expired last month. That's probably the issue.

Two hours later, they get a text: "Looks like checkout didn't go through—want to update your payment info?" with a link that takes them straight to the right screen.

If they update and complete the purchase, done. If they don't, a human gets looped in after a couple of days.
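The checkout example can be written down as a small planning function. This is a sketch under stated assumptions: `StoredCard`, `RecoveryPlan`, and `plan_checkout_recovery` are made-up names, and "a couple of days" is taken to mean two days after the message is sent.

```python
from dataclasses import dataclass
from datetime import date, datetime, timedelta
from typing import Optional


@dataclass
class StoredCard:
    exp_year: int
    exp_month: int

    def is_expired(self, today: date) -> bool:
        # A card is valid through the last day of its expiry month.
        return (today.year, today.month) > (self.exp_year, self.exp_month)


@dataclass
class RecoveryPlan:
    message: str
    send_at: datetime
    escalate_at: datetime  # hand to a human if no purchase by then


# Assumed timings taken loosely from the example in the text.
SEND_DELAY = timedelta(hours=2)
ESCALATE_AFTER = timedelta(days=2)


def plan_checkout_recovery(
    dropoffs_this_month: int,
    dropped_at: datetime,
    card: Optional[StoredCard],
) -> Optional[RecoveryPlan]:
    """Plan a nudge only for a repeat drop-off with a likely, fixable cause."""
    if dropoffs_this_month < 2:
        return None
    if card is None or not card.is_expired(dropped_at.date()):
        return None  # no clear cause here; leave it to other signals
    send_at = dropped_at + SEND_DELAY
    return RecoveryPlan(
        message="Looks like checkout didn't go through. "
                "Want to update your payment info?",
        send_at=send_at,
        escalate_at=send_at + ESCALATE_AFTER,
    )
```

Returning `None` when the cause is unclear is deliberate: the agent stays quiet unless it has a specific, fixable reason to reach out.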

4. Loyalty and retention

You can't run retention as a program once a quarter. It's a series of small moments where you either reinforce the relationship or let it drift.

Most retention spend goes toward customers who already have one foot out the door. They've called to cancel, or they've stopped using the product entirely. By then, you're negotiating with someone who isn't keen on staying anyway.

Agentic AI shifts this upstream. Way upstream.

- If someone's usage starts dropping, the agent can notice and, instead of sending a generic "we miss you" email, send something tied to what they used to do: "You haven't checked your reports in a couple of weeks. Here's what's changed since you were last in."

- If someone's payment fails, the agent can start a dunning sequence that escalates gradually—friendly at first, more urgent over time, before the account lapses.

You are more likely to retain customers when you catch these early signals. It is small acts of attentiveness, scaled. And they compound.
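A gradually escalating dunning sequence like the one described above might be modeled as a simple schedule lookup. The day offsets, tone labels, and lapse day below are placeholders, not recommended values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DunningStep:
    day: int            # days after the first failed payment
    tone: str           # message tone for this step
    retry_charge: bool  # whether to retry the payment at this step


# Hypothetical schedule: friendly first, more urgent over time.
DUNNING_SCHEDULE = (
    DunningStep(day=0, tone="friendly", retry_charge=False),
    DunningStep(day=3, tone="reminder", retry_charge=True),
    DunningStep(day=7, tone="urgent", retry_charge=True),
)
LAPSE_DAY = 10  # assumed day on which the account lapses


def next_dunning_step(days_since_failure: int, steps_sent: int):
    """Return the next step that is due, or None if nothing is due,
    the schedule is exhausted, or the lapse day has been reached."""
    if days_since_failure >= LAPSE_DAY:
        return None
    if steps_sent >= len(DUNNING_SCHEDULE):
        return None
    step = DUNNING_SCHEDULE[steps_sent]
    return step if days_since_failure >= step.day else None
```

Keeping the schedule as data means the escalation curve can be tuned without touching the decision logic.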

PII Boundaries

Access is routine. Autonomous action is where risk concentrates.

Reversible, low-stakes actions may execute independently. Identity changes, payment updates, and legal records require confirmation or human oversight.
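One way to make that boundary explicit is an action gate that every agent decision passes through. The action names and their flags here are invented for illustration, and the split between customer confirmation and human review is one possible reading of "confirmation or human oversight".

```python
from enum import Enum


class Decision(Enum):
    EXECUTE = "execute"
    CONFIRM_WITH_CUSTOMER = "confirm_with_customer"
    HUMAN_REVIEW = "human_review"


# Hypothetical action catalogue; a real one would live in policy config.
ACTION_POLICY = {
    "resend_receipt":        {"reversible": True,  "sensitive": False},
    "apply_small_credit":    {"reversible": True,  "sensitive": False},
    "change_email":          {"reversible": True,  "sensitive": True},
    "update_payment_method": {"reversible": True,  "sensitive": True},
    "amend_legal_record":    {"reversible": False, "sensitive": True},
}


def gate(action: str) -> Decision:
    """Decide whether the agent may act alone on the named action."""
    policy = ACTION_POLICY.get(action)
    if policy is None:
        return Decision.HUMAN_REVIEW  # unknown actions never run autonomously
    if not policy["reversible"]:
        return Decision.HUMAN_REVIEW
    if policy["sensitive"]:
        return Decision.CONFIRM_WITH_CUSTOMER
    return Decision.EXECUTE
```

Defaulting unknown actions to human review keeps the agent's authority from growing by accident as new tools are connected.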

This is the trust architecture. Customers tolerate automation when control remains visible. Break that boundary once, and recovery costs more than any saved ticket. Learn about Sprinklr's commitment to responsible AI.

High-impact use cases of agentic AI in customer experience

This is where agentic systems stop being theoretical. Each use case hinges on a single idea: the agent completes the action on its own, within defined bounds.

1. Autonomous order updates

Agent action: Detects order state changes, predicts delays, and pushes proactive updates across the right channel.

Result: Fewer “where is my order” contacts, lower inbound volume.

Example: Shipment delay detected → agent sends revised ETA + explanation before the customer asks.

2. Intelligent recovery after negative sentiment

Agent action: Monitors sentiment drift across interactions and triggers recovery before churn intent surfaces.

Result: Higher recovery rates, reduced escalations.

Example: Frustrated tone in chat + prior ticket history → agent initiates follow-up with context-aware apology and fix.

3. Account-level personalization

Agent action: Applies personalization based on account behavior, not just user profile fields.

Result: Better engagement without over-targeting.

Example: Power user routed to advanced help paths; casual user gets simplified guidance.

4. Subscription management

Agent action: Manages renewals, downgrades, pauses, and dunning flows within policy limits.

Result: Lower involuntary churn, fewer billing tickets.

Example: Payment failure detected → agent retries, notifies, and escalates gradually before lapse.

5. Effort score reduction interventions

Agent action: Detects repeated friction and removes steps instead of adding explanations.

Result: Reduced customer effort scores, shorter resolution paths.

Example: Same issue logged twice → agent bypasses Tier 1 and routes directly with context.

6. CX journey orchestration

Agent action: Coordinates actions across onboarding, support, and retention moments as one continuous flow.

Result: Fewer disjointed experiences across channels.

Example: Onboarding friction leads to proactive support, not a standalone ticket later.

7. Social care triage

Agent action: Classifies social posts by urgency, influence, and risk, then acts or routes accordingly.

Result: Faster response on high-impact issues, lower brand risk.

Example: Viral complaint detected → agent escalates instantly with full context attached.

8. Identity verification + routing

Agent action: Verifies identity signals silently and routes cases without redundant authentication.

Result: Shorter handle times, less customer frustration.

Example: Authenticated app user routed past verification to account-specific support.

9. Complaint clustering + action triggers

Agent action: Groups similar complaints and triggers corrective action, not just closures.

Result: Fewer repeat issues, visible systemic fixes.

Example: Spike in checkout complaints → agent flags root cause and initiates a customer service workflow to fix it.

10. CX insights → configured fixes

Agent action: Converts insight patterns into executable rules and workflows.

Result: Faster learning loops, less insight decay.

Example: Insight shows abandonment at one step → agent deploys targeted intervention automatically.

The data layer behind agentic AI in customer experience

Agentic AI lives or dies on the quality of the data on which decisions are made. The agent has to recognize the customer, read the moment, understand intent, and then act in a way that holds up across channels. If any of those inputs wobble, the system either freezes, overreaches, or escalates everything to humans.

The data layer that supports strong execution of agentic AI in CX rests on a few non-negotiable pillars:

1. Identity stitching

The system needs a working identity graph that connects logins, devices, cookies, email addresses, phone numbers, and case history into one operational view. Precision helps, but confidence thresholds matter more. The agent should know when identity certainty is strong enough to act and when ambiguity requires verification or routing.

2. Real-time signals

Customer experience unfolds in motion. Session events, error states, retries, click loops, order scans, and sentiment shifts must arrive fast enough to reflect current reality. Interventions built on stale signals feel misplaced, even when the logic appears sound.

3. Customer intent vectors

Intent should move, not sit still. The system needs a continuously updated directionality model that reflects buying momentum, confusion, comparison behavior, customer frustration, or exit risk. Decision intensity flows from this vector. It guides the agent on whether to nudge, fix, escalate, or stay quiet.

4. Journey step metadata

Behavior only makes sense in context. A pause in checkout signals friction. A pause in account settings may signal caution. Step-level metadata anchors signals to their place in the journey, allowing the agent to interpret actions correctly and avoid blanket interventions.

5. Preferences and consent status

Channel preferences, frequency limits, opt-outs, and regional consent requirements must be machine-readable and enforced at decision time. Agentic systems move across touchpoints quickly. Governance must move with equal precision.

6. PII masking logic

Sensitive fields require structured visibility controls. The agent should access the minimum viable data needed for the task, with redaction by default and authorization gating for sensitive actions. Permission rules must attach to execution authority, not live in static policy documents.

When these pillars hold, the agent operates with confidence and restraint.
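To make a few of these pillars concrete, here is a minimal sketch of checks an agent could run at decision time: an identity confidence threshold, a consent and frequency check, and a redact-by-default view of the profile. The thresholds, the weekly cap, and the `FIELDS_BY_TASK` mapping are assumptions for illustration only.

```python
from dataclasses import dataclass, field


@dataclass
class CustomerContext:
    identity_confidence: float  # 0.0 to 1.0, from identity stitching
    opted_out_channels: set = field(default_factory=set)
    messages_sent_this_week: int = 0
    profile: dict = field(default_factory=dict)


# Hypothetical thresholds and per-task field allowlists.
ACT_THRESHOLD = 0.9
VERIFY_THRESHOLD = 0.6
WEEKLY_MESSAGE_CAP = 3
FIELDS_BY_TASK = {"order_update": {"first_name", "order_id"}}


def identity_decision(ctx: CustomerContext) -> str:
    """Act only when identity certainty is strong; otherwise verify or route."""
    if ctx.identity_confidence >= ACT_THRESHOLD:
        return "act"
    if ctx.identity_confidence >= VERIFY_THRESHOLD:
        return "verify"
    return "route_to_human"


def may_contact(ctx: CustomerContext, channel: str) -> bool:
    """Enforce opt-outs and frequency limits at decision time."""
    return (channel not in ctx.opted_out_channels
            and ctx.messages_sent_this_week < WEEKLY_MESSAGE_CAP)


def masked_view(ctx: CustomerContext, task: str) -> dict:
    """Redact by default: expose only the fields the task is allowed to see."""
    allowed = FIELDS_BY_TASK.get(task, set())
    return {key: (value if key in allowed else "[REDACTED]")
            for key, value in ctx.profile.items()}
```

A task with no entry in `FIELDS_BY_TASK` sees every field redacted, so adding a new task requires an explicit decision about what it may read.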

If these data aren’t clean, your agent will fail

- Identity resolution produces duplicates or uncertain merges without confidence scoring

- Event streams arrive late or lack session continuity

- Intent gets labeled once and never updated

- Journey steps are disconnected across systems

- Consent rules rely on manual enforcement

- Sensitive data flows without action-level permission controls

💡 Which jobs-to-be-done are the best first targets?

The strongest early targets share clear completion criteria, high frequency, and bounded execution risk. Order status clarity, low-threshold billing corrections, guided troubleshooting tied to telemetry, and early friction recovery fit this profile. Each allows measurable impact while keeping authority limits tight.

Jobs that involve irreversible account changes, identity proofing, legal disputes, or high-value refunds belong later, once the data layer has proven it can support decision-grade execution under pressure.

How leading brands are already using agentic AI in CX

Agentic AI in customer experience has already moved past pilots and proofs. It now lives inside real contact centers, listening to every interaction, deciding what matters, and steering action. Not by replacing agents, but by seeing patterns humans cannot and turning them into timely nudges, insights, and next-best moves.

For example, Enter Connect Center in Peru was handling 600,000+ inbound calls a month, yet fewer than 1 percent were reviewed. Sales intent often passed unnoticed, and coaching relied on intuition rather than evidence.

They chose to implement Agent2Sales, which helped them automatically analyze every call, detect buying signals and missed moments, and feed daily, personalized insights to agents and supervisors. The system also surfaces real-time sentiment and product signals for downstream teams.

In just 10 weeks, inbound service sales grew 40 percent. Agents doubled offer rates during service calls. NPS held steady, edging up slightly (40.3% → 41.2%), showing growth without experience erosion.

Agentic AI doesn’t wait to be asked. It listens, decides, and guides. That shift, from assistance to agency, is already reworking how customer experience runs day to day.

Agentic AI in customer experience: The next 12 months

In 2026 and beyond, agentic AI in CX will be judged less by how human it sounds and more by whether it can carry responsibility through a messy service moment. The real shift will be from answer generation to accountable execution. A customer reports a failed delivery; the agent checks order status, reads policy, weighs loyalty tier and risk, opens a case, offers the allowed remedy, records why it acted, and hands off when confidence drops. That chain is where the future sits.

The winners will not be the teams with the widest agentic implementation. They will be the teams that teach the agents they have how the business runs: which actions need approval, which promises create legal risk, which customers need urgency, and which fixes cost less than another apology.

CX leaders will spend 2026 cleaning knowledge, permissions, workflows, QA, and audit trails because autonomy breaks fast when the basics are brittle.

The most interesting change will be invisible to customers. They will not “meet” agentic AI. They will feel a brand that remembers, acts, explains, and recovers without hiccups.

CX leaders will manage agents like teams, with defined roles, permissions, playbooks, and QA loops.

That is where platforms built around structured agent roles and guardrails start to matter. If your stack supports multiple supervised agents acting across channels rather than a single chatbot bolted onto one surface, you are positioned to move from pilot to execution.

Sprinklr AI Agents fit that direction: role-based, policy-aware, and designed to act across the full CX workflow without sacrificing control. Wanna take a look?

Frequently Asked Questions

Conversational AI can respond, but it cannot resolve. Brands are shifting because CX pressure now sits around speed, follow-through, and accountability. Agentic AI closes loops: it decides, acts across systems, and fixes issues instead of handing customers another conversation.

Agentic AI can manage order updates, refunds within policy, proactive delay notifications, subscription changes, identity checks, complaint clustering, and journey-level interventions. These tasks work well when rules are clear, data is reliable, and outcomes are reversible without customer harm.

Teams need strong process clarity, clean policy definitions, journey ownership, and basic data literacy. The hard skill is not prompt writing, but operational thinking: defining what decisions are allowed, when escalation is required, and how success or failure is measured.

Yes, if autonomy is bounded. Control comes from policy layers, permissioning, audit trails, and human-in-the-loop checkpoints for high-risk actions. Well-designed agentic systems increase control by making decisions explicit and traceable, rather than buried inside manual work.

Trust builds when agents are introduced gradually, outcomes are visible, and failures are explainable. Start with low-risk actions, review decisions regularly, evolve policies, and treat agents like junior operators that are coached, measured, and corrected, not black boxes left alone.