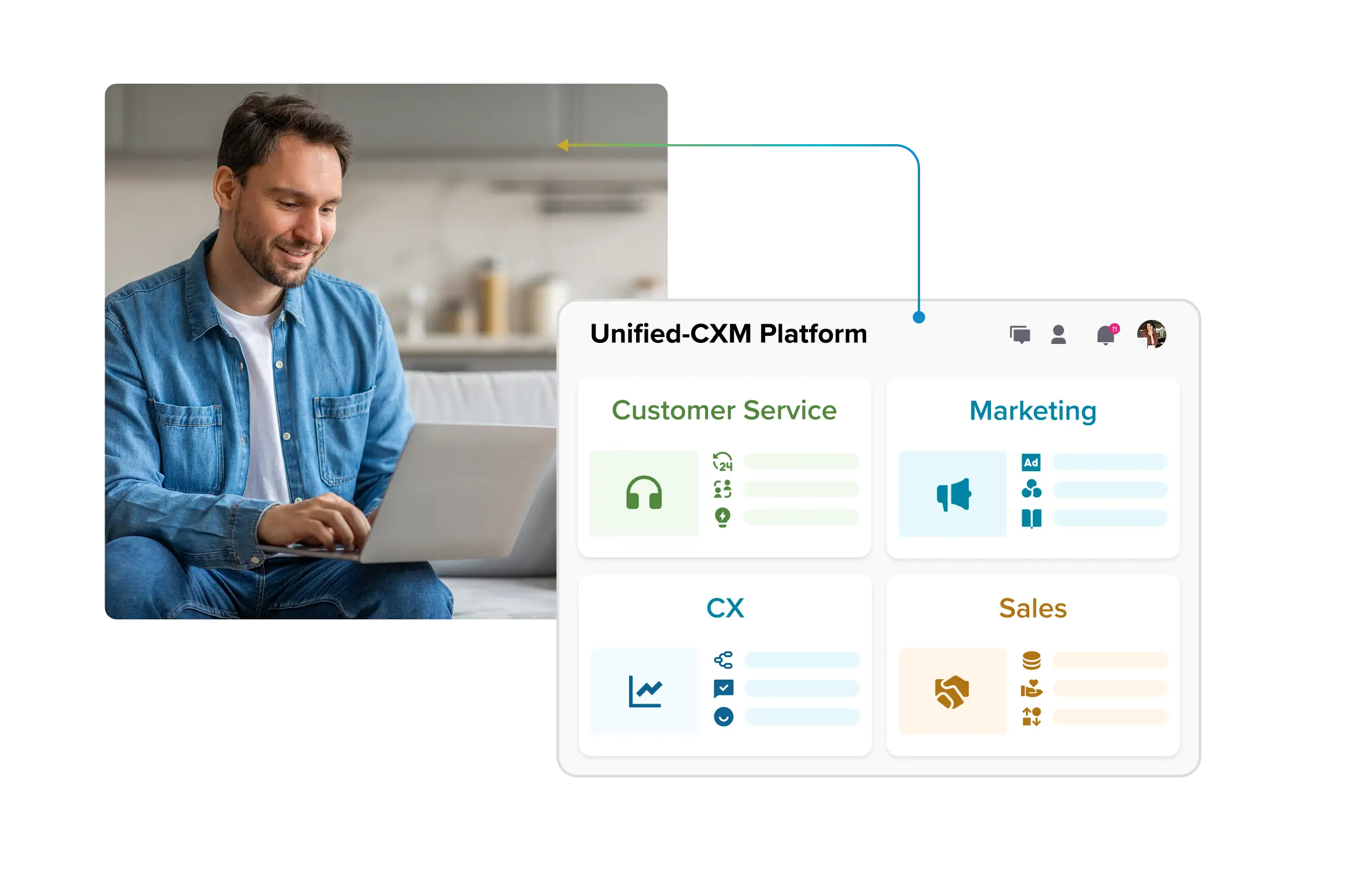


Read more about Sprinklr’s continued commitment to responsible AI

With widespread adoption and penetration across industries, regions and use cases, generative AI is here to stay. From marketing to healthcare, consumers from all walks of life have embraced the technology to scale their operations and cut costs. However, the technology has developed faster than the regulatory landscape around it, giving rise to trust and security issues such as data breaches, copyright violations, unregulated bias and deepfakes, and leading many countries and enterprises to impose a blanket ban on its use.

In such a scenario, it becomes vital for enterprises to team up with technology partners that have an unwavering focus on security and governance.

At Sprinklr, we understand the need for responsible AI development despite the relatively nascent legal framework around the technology. With our core pillars of trust, integrity and partnership, we believe that a responsible approach should benefit society, promote ethical use, and minimize adverse impact and risk.

AI is in Sprinklr’s DNA

AI is integral to our business model at Sprinklr – our unique CXM platform bundles 50+ AI models, which enhance the digital experience for our customers and streamline and improve their customer engagement and interaction.

Apart from leading the AI-powered CXM space, Sprinklr lives and breathes the idea of enabling every organization on the planet to make its customers happier. “The Sprinklr Way” is a set of unifying values and beliefs that are embodied by our people and products. We put humans first – and we lead with ethics and empathy, forging a human connection that makes us different, not just better.

With next-generation products like sentiment analysis and social listening, Sprinklr provides unique customer insights and delivers seamless, personalized experiences to its users.

Sprinklr is purpose-built for compliance and governance

Our AI commitments are fortified with enterprise-grade unified governance that is underpinned by three core pillars:

- We foster trust

When developing new AI capabilities, we do a thorough impact assessment to rule out bias, encourage fairness and mitigate trust violations proactively. The aim is to understand the interplay between AI and our product offerings, from development through deployment, and reduce risk (if any) to tolerable levels.

- We believe in integrity

Protecting and securing our customers' data is of paramount importance at Sprinklr. Our AI models are trained on large volumes of data to drive accuracy. We use open-source and public data sets, mask PII, and encrypt data in transit and at rest wherever feasible. We regularly conduct risk assessments on code and infrastructure to detect potential security vulnerabilities.

- We nurture partnerships

At Sprinklr, we strive to continuously improve and enhance our customers’ bespoke AI models to help accomplish desired outcomes and drive best-in-class performance. All of our products are crafted, tested and monitored by humans. A team of more than 200 people natively annotates all models to drive authentic outcomes. Furthermore, a customer feedback mechanism is built into the system, and feedback is analyzed and incorporated into product refinement exercises.

Further, all Sprinklr AI models have to pass through a seven-step QA process prior to deployment. Sprinklr is a 100% SaaS solution, and customer data is hosted and stored strictly in the cloud. All data in transit is encrypted via TLS 1.2, and all data at rest is encrypted using AES-256.
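For readers who want a concrete picture of what a TLS 1.2 requirement for data in transit means, here is a minimal sketch using Python's standard `ssl` module. It is an illustration only and not Sprinklr's implementation. The function name `make_tls12_context` is hypothetical, and the sketch assumes TLS 1.2 is treated as the minimum protocol version a client will accept.

```python
import ssl


def make_tls12_context() -> ssl.SSLContext:
    """Build a client-side TLS context that refuses anything older than TLS 1.2.

    Hypothetical helper for illustration; not taken from any Sprinklr code.
    """
    # create_default_context() turns on certificate verification
    # and hostname checking for client connections.
    context = ssl.create_default_context()
    # Assumption: TLS 1.2 is the minimum acceptable version, so older
    # protocol versions are rejected during the handshake.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = make_tls12_context()
```

A context built this way can be passed to standard-library clients such as `http.client.HTTPSConnection`, so that any connection to a server offering only older protocol versions fails at the handshake.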

Learn more: Sprinklr and GDPR: What You Need to Know

Sprinklr’s Responsible AI – Technology to serve people

At Sprinklr, we believe technology can complement humans but never replace them. Human involvement is imperative when it comes to applying cutting-edge tools like generative AI. To drive diligence and build a culture of accountability, we have put various processes, policies and explainers in place to check for data and model bias and to mitigate it. To learn more about our compliance and trust initiatives, check out our Trust Center.