Conversational Agents: The New Frontline of CX

Key takeaways

- Conversational agents now function as workflow systems that interpret intent across turns, maintain context and carry interactions forward until a task reaches completion.

- Modern agents rely on a layered architecture to stay reliable in production. Language understanding, policy control, tool access, memory and guardrails work together to reach structured outcomes.

- The shift to agentic behavior changes the unit of work from replies to outcomes. Agents plan steps, execute actions across systems and close loops without forcing users to restate intent at every stage.

- Effectiveness depends less on intelligence and more on control, clarity and continuity. Strong agents guide progress, recover from errors, adapt tone and escalate when needed while keeping the interaction coherent end to end.

Today’s conversational agents are being designed as working surfaces where reasoning, memory, and action converge. They don’t merely respond. They interpret intent across turns, decide what step comes next, and coordinate real work across systems to lead conversations to completion.

This shift matters because it changes how software is built and how people interact with it. Conversations are no longer wrappers around workflows. They are the workflow. This guide unpacks how modern conversational agents function, why they are becoming central to enterprise design, and what separates surface-level chat from systems that can carry work end to end.

- What are conversational agents and why is the term misunderstood?

- How modern conversational agents actually work

- The strategic leap: From conversational agents to agentic behavior

- 6 conversational agent examples that deliver real business outcomes

- What makes a conversational agent effective?

- What conversational agents still can’t do

- The future of conversational agents: What’s coming in 2026 and beyond

What are conversational agents and why is the term misunderstood?

The term conversational agent sounds simple, but it hides a lot of complexity. At its core, a conversational agent is a system designed to interact with people through natural language while keeping track of context across an exchange. That part is familiar. What often gets missed is that modern conversational agents have evolved to take actions that resolve queries once the conversation begins.

They are designed as interaction-driven systems, where language becomes the entry point into logic, decisions, and actions. They interpret intent over multiple turns, remember what has already happened, and move interactions toward a concrete outcome.

This is why the term is frequently misunderstood.

Many still associate conversational agents with scripted bots or narrow assistants. In practice, modern conversational agents operate much closer to workflows than dialogs. A conversation can lead to creating a case, checking eligibility, guiding troubleshooting, or helping a user discover the right product. The exchange evolves as new information appears, rather than restarting with every message.

A helpful way to frame the shift is this: chat as an interface versus conversation as a workflow.

Chat as an interface focuses on replying. Conversation as a workflow focuses on progress. The first answers questions. The second carries work forward.

The words matter because they signal a deeper architectural shift.

To make that distinction clearer, here is how different systems typically compare:

| Category | What it fundamentally represents | How interaction works | Typical scope |
| --- | --- | --- | --- |
| Chatbots | Rule-driven responders | React to keywords or fixed paths | FAQs, basic queries |
| Virtual assistants | Command-based helpers | Handle short, structured requests | Search, reminders, simple tasks |
| Conversational agents | Workflow-aware interaction systems | Maintain context and guide multi-step tasks | Support, onboarding, troubleshooting, discovery |
| Agentic AI | Goal-driven systems with decision logic | Plan, act, and close loops across systems | End-to-end task ownership |

Seen this way, conversational agents sit at an important transition point. They are no longer just interfaces that talk back. They are systems that carry intent through a sequence of actions, shaping how work actually gets done through conversation.

Where should conversational agents sit across app, web, and messaging so the journey feels seamless?

Conversational agents should live wherever users already start and continue their work, not behind a single entry point. That means inside apps, on the web, and across messaging channels, all connected to the same underlying context. The experience should feel continuous even when the surface changes. A question started in chat should carry forward inside a product screen or support flow without resetting intent. When context follows the user journey rather than being stalled at a channel boundary, conversations feel natural, consistent, and easy to continue.
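One way to picture context that follows the user is a store keyed by customer rather than by channel. The sketch below is illustrative only: `ContextStore`, `JourneyContext`, `record`, and `resume` are hypothetical names, and the in-memory dictionary stands in for whatever persistent store a real deployment would use.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class JourneyContext:
    """What is known so far about one customer's in-progress request."""
    intent: Optional[str] = None
    facts: Dict[str, str] = field(default_factory=dict)
    channels_seen: List[str] = field(default_factory=list)


class ContextStore:
    """Hypothetical in-memory store keyed by customer, not by channel."""

    def __init__(self) -> None:
        self._by_customer: Dict[str, JourneyContext] = {}

    def record(self, customer_id: str, channel: str,
               intent: Optional[str] = None, **facts: str) -> None:
        ctx = self._by_customer.setdefault(customer_id, JourneyContext())
        if channel not in ctx.channels_seen:
            ctx.channels_seen.append(channel)
        if intent is not None:
            ctx.intent = intent
        ctx.facts.update(facts)

    def resume(self, customer_id: str, channel: str) -> JourneyContext:
        # Switching surfaces returns the same context instead of a blank one.
        self.record(customer_id, channel)
        return self._by_customer[customer_id]


store = ContextStore()
store.record("cust-42", "web_chat", intent="return_item", order_id="A1001")
resumed = store.resume("cust-42", "mobile_app")
print(resumed.intent, resumed.facts, resumed.channels_seen)
```

Because the lookup key is the customer, the intent captured in web chat is still present when the same person opens the mobile app.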

How modern conversational agents actually work

Think of a conversational agent as a small system made of cooperating parts. Each part has a clear job, and each one hands work to the next. When they are designed to flow together, the experience feels natural. When they are not, conversations break, stall, or spiral. Below is how these layers fit together, step by step, without treating them as isolated components.

1. Language core: where meaning first takes shape

Everything begins with language. This layer interprets what a person says, even when the message is incomplete, indirect, or loosely phrased. It helps the system understand intent, tone, and direction. The language core does not decide what action to take on its own. Its role is to translate human input into a structured understanding that the rest of the system can work with. Think of it as the listener that turns conversation into something actionable.
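As a rough illustration of "structured understanding," the sketch below turns a free-text message into a small record of intent, entities, and tone. The `Understanding` record and the `interpret` function are hypothetical, and the keyword matching is only a stand-in for the language model a real system would call at this step.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Understanding:
    """Structured output of the language core; it names no action to take."""
    intent: str
    entities: Dict[str, str] = field(default_factory=dict)
    tone: str = "neutral"


def interpret(message: str) -> Understanding:
    """Toy stand-in for the language core (assumption: keyword rules, not a model)."""
    text = message.lower()
    intent = "unknown"
    if "refund" in text or "money back" in text:
        intent = "request_refund"
    elif "where" in text and ("order" in text or "delivery" in text):
        intent = "track_order"

    entities: Dict[str, str] = {}
    cleaned = message.replace(",", " ").replace(".", " ").replace("?", " ")
    for word in cleaned.split():
        # Treat tokens like "#1234" as order numbers.
        if word.startswith("#") and word[1:].isdigit():
            entities["order_id"] = word[1:]

    tone = "frustrated" if "!" in message or "still" in text else "neutral"
    return Understanding(intent, entities, tone)


result = interpret("Where is my order #1234? It still hasn't arrived!")
print(result)
```

Note that the record describes what was said and how, but leaves the choice of action to the layers that follow.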

2. Policy layer: shaping behavior and boundaries

Once meaning is understood, it passes through a policy layer. This layer defines how the agent should behave in different situations. It carries brand voice, compliance rules, and internal constraints. It decides what kinds of responses are acceptable and which actions are off-limits. This layer ensures consistency and safety, especially when conversations touch sensitive topics or regulated processes. It quietly shapes every response before anything moves forward.
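A policy layer can be sketched as a check that runs before any proposed action moves forward. Everything here is an assumption made for illustration: the `Policy` fields, the `apply_policy` function, and the example action names do not come from any particular product.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class Policy:
    allowed_actions: FrozenSet[str]     # actions the agent may take at all
    regulated_intents: FrozenSet[str]   # intents that require a compliance notice


@dataclass(frozen=True)
class PolicyDecision:
    allowed: bool
    needs_disclosure: bool
    reason: str


def apply_policy(policy: Policy, intent: str, proposed_action: str) -> PolicyDecision:
    """Decide whether a proposed action is acceptable before anything executes."""
    if proposed_action not in policy.allowed_actions:
        return PolicyDecision(False, False, f"action '{proposed_action}' is off-limits")
    needs_disclosure = intent in policy.regulated_intents
    return PolicyDecision(True, needs_disclosure, "within policy")


policy = Policy(
    allowed_actions=frozenset({"lookup_order", "create_case"}),
    regulated_intents=frozenset({"request_refund"}),
)
blocked = apply_policy(policy, "request_refund", "issue_refund")
permitted = apply_policy(policy, "request_refund", "create_case")
print(blocked)
print(permitted)
```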

3. Tool access: turning intent into action

With intent clarified and boundaries applied, the agent may need to act. This is where tool access comes in. Tools represent real capabilities such as checking an order, creating a case, searching records, or updating a system. The conversational agent does not “know” these systems directly. Instead, it calls them in structured ways. This separation keeps actions predictable and controlled while allowing conversations to produce real outcomes.
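The idea of calling systems "in structured ways" can be sketched as a registry of approved tools with declared arguments. This is a minimal illustration under stated assumptions: `ToolRegistry`, `check_order`, and the result shape are hypothetical, and the backend lookup is hard-coded.

```python
from typing import Any, Callable, Dict, Tuple


class ToolRegistry:
    """Hypothetical registry: the agent can only call tools listed here."""

    def __init__(self) -> None:
        self._tools: Dict[str, Tuple[Callable[..., Any], Tuple[str, ...]]] = {}

    def register(self, name: str, fn: Callable[..., Any],
                 required: Tuple[str, ...]) -> None:
        self._tools[name] = (fn, required)

    def call(self, name: str, **args: Any) -> Dict[str, Any]:
        if name not in self._tools:
            return {"ok": False, "error": f"unknown tool: {name}"}
        fn, required = self._tools[name]
        missing = [arg for arg in required if arg not in args]
        if missing:
            return {"ok": False, "error": f"missing arguments: {', '.join(missing)}"}
        return {"ok": True, "result": fn(**args)}


def check_order(order_id: str) -> Dict[str, str]:
    # Stand-in for a real backend lookup; the status is hard-coded.
    return {"order_id": order_id, "status": "shipped"}


registry = ToolRegistry()
registry.register("check_order", check_order, required=("order_id",))

good = registry.call("check_order", order_id="A1001")
incomplete = registry.call("check_order")
unknown = registry.call("delete_account", user="u1")
print(good, incomplete, unknown, sep="\n")
```

Unknown tools and incomplete calls come back as structured errors rather than exceptions, which keeps the conversation in control of what happens next.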

4. Memory and context: keeping the thread intact

As actions happen, context must travel with them. Memory allows the agent to retain what has already been said, what steps are complete, and what still needs attention. It prevents repetitive questions and helps the system adapt as the conversation evolves. Context also allows the agent to connect earlier signals with later ones, so interactions feel continuous rather than reset with every turn.
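A small sketch of how memory prevents repetitive questions: the agent records what it already knows and asks only for what is missing. The `ConversationMemory` class and its method names are hypothetical, chosen for this example.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ConversationMemory:
    known: Dict[str, str] = field(default_factory=dict)
    completed_steps: List[str] = field(default_factory=list)

    def remember(self, **facts: str) -> None:
        self.known.update(facts)

    def mark_done(self, step: str) -> None:
        if step not in self.completed_steps:
            self.completed_steps.append(step)

    def next_question(self, required: List[str]) -> Optional[str]:
        """Return the first required field not yet known, or None if all are known."""
        for name in required:
            if name not in self.known:
                return name
        return None


memory = ConversationMemory()
required = ["order_id", "email"]

memory.remember(order_id="A1001")
first_missing = memory.next_question(required)    # order_id is not asked again

memory.remember(email="sam@example.com")
memory.mark_done("identity_verified")
then_missing = memory.next_question(required)

print(first_missing, then_missing, memory.completed_steps)
```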

5. Guardrails and escalation: knowing when to slow down

Every system needs limits. Guardrails define when the agent should pause, ask for clarification, or involve a human. They handle uncertainty, edge cases, and high-risk situations. Escalation logic ensures that responsibility shifts at the right moment instead of forcing automation where judgment is required. This layer protects users, teams, and outcomes while keeping conversations steady under pressure.
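The pause, clarify, or hand-off choice described above can be sketched as a single decision function. The thresholds and parameter names below are assumptions picked for illustration, not recommended values.

```python
from enum import Enum


class Decision(Enum):
    PROCEED = "proceed"
    CLARIFY = "clarify"
    ESCALATE = "escalate"


def guardrail(confidence: float, high_risk: bool, failed_attempts: int,
              clarify_below: float = 0.7, escalate_below: float = 0.4,
              max_failed_attempts: int = 2) -> Decision:
    """Choose whether to continue, ask a clarifying question, or involve a human."""
    if high_risk or confidence < escalate_below or failed_attempts >= max_failed_attempts:
        return Decision.ESCALATE
    if confidence < clarify_below:
        return Decision.CLARIFY
    return Decision.PROCEED


print(guardrail(confidence=0.9, high_risk=False, failed_attempts=0))
print(guardrail(confidence=0.6, high_risk=False, failed_attempts=0))
print(guardrail(confidence=0.9, high_risk=True, failed_attempts=0))
```

Checking the escalation conditions first means a high-risk request is handed off even when the agent is confident about what was asked.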

Together, these layers form a single flow rather than separate parts. Language feeds policy. Policy shapes tool use. Tools depend on memory. Memory works within guardrails. When designed as a whole, conversational agents move from simple message handling to structured, dependable interaction that can carry real work forward without losing control.

What’s the minimal architecture required to run conversational agents with tool use, memory, and guardrails in production?

A minimal production setup needs five pieces working together.

- Start with an LLM for language understanding and response generation.

- Add an orchestration layer that manages turns, decides when to call tools, and tracks state.

- Connect a tool layer for approved actions like search or CRM updates.

- Include a memory store for conversation state and key user context.

- Finally, add guardrails and escalation logic for approvals, sensitive cases, and handoff to humans when confidence is low.
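The five pieces above can be wired into a single turn handler. This is a deliberately small sketch, not a production design: `fake_llm` stands in for a real model call, `TOOLS` is a hypothetical allowlist, the state dictionary plays the role of the memory store, and the 0.5 confidence threshold is an arbitrary assumption.

```python
from typing import Any, Callable, Dict


def fake_llm(message: str) -> Dict[str, Any]:
    """Stand-in for an LLM call: returns an intent and a confidence score."""
    if "order" in message.lower():
        return {"intent": "track_order", "confidence": 0.9}
    return {"intent": "unknown", "confidence": 0.2}


# Tool layer: only approved actions appear here.
TOOLS: Dict[str, Callable[[str], str]] = {
    "track_order": lambda order_id: f"Order {order_id} is out for delivery.",
}


def handle_turn(message: str, state: Dict[str, Any]) -> str:
    """Orchestration: manage one turn, decide on tool use, and track state."""
    state.setdefault("history", []).append(message)   # memory store
    understood = fake_llm(message)                    # language understanding
    if understood["confidence"] < 0.5:                # guardrail: low confidence
        state["escalated"] = True
        return "Let me connect you with a teammate who can help."
    tool = TOOLS.get(understood["intent"])
    if tool is None:
        return "I can't do that yet, but I can hand this to a person."
    order_id = state.get("order_id")
    if order_id is None:                              # ask for missing state
        return "Which order number is this about?"
    return tool(order_id)


state: Dict[str, Any] = {"order_id": "A1001"}
reply = handle_turn("Where is my order?", state)

unclear_state: Dict[str, Any] = {}
fallback = handle_turn("asdfgh", unclear_state)
print(reply)
print(fallback)
```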

The strategic leap: From conversational agents to agentic behavior

This is where conversational systems stop treating dialogue as the unit of work and start treating outcomes as the unit of work.

Traditional conversational AI agents wait for the next message. Even when they feel fluid, their role is still reactive. Agentic behavior changes that posture entirely. The system begins to own progress. It does not just respond to what was said last. It reasons about what still needs to happen, what outcome is being pursued, and how to move closer to it.

The shift starts with independent task planning. Instead of treating each user message as a standalone input, the agent forms an internal plan. It breaks a goal into steps, decides an order, and keeps track of what has already been completed. This planning layer allows conversations to stretch across time without losing direction.

From there comes multi-step execution. Real work rarely happens in one move. An agent may need to verify information, retrieve records, trigger actions, and check results before moving forward. Each step depends on the outcome of the previous one. The agent coordinates this sequence without requiring the user to restate intent at every turn.

Decision-making sits at the center of it all. Agentic systems evaluate conditions, weigh options, and choose paths based on context rather than rigid flows. They can decide when enough information has been gathered, when risk is too high, or when human input is required. This makes behavior adaptive instead of scripted.

As maturity increases, agents begin to operate across channels rather than inside a single conversation surface. A request might start in chat, continue through email or notifications, and resolve inside a backend system. The user experiences continuity, not channel hopping.

Finally, agentic behavior closes the loop. It summarizes what happened, logs outcomes, updates records, and confirms completion. Work does not just happen; it gets wrapped up cleanly.

Consider a support scenario. A user reports an issue. The agent investigates, creates a case, sends status updates, and closes the ticket once resolved. Or take refunds: eligibility is checked, the refund is issued, and the customer is notified. These are not isolated replies. They are coordinated sequences driven by intent.
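The refund sequence can be sketched as a plan whose steps run in order, each depending on the one before it. The step functions, the `run_plan` helper, and the 30-day eligibility rule are all assumptions made for this example.

```python
from typing import Any, Callable, Dict, List, Tuple

Context = Dict[str, Any]
Step = Tuple[str, Callable[[Context], bool]]


def check_eligibility(ctx: Context) -> bool:
    # Assumed rule for illustration: refunds are allowed within 30 days.
    ctx["eligible"] = ctx["days_since_purchase"] <= 30
    return ctx["eligible"]


def issue_refund(ctx: Context) -> bool:
    ctx["refund_id"] = f"RF-{ctx['order_id']}"
    return True


def notify_customer(ctx: Context) -> bool:
    ctx["notified"] = True
    return True


def run_plan(steps: List[Step], ctx: Context) -> List[str]:
    """Run steps in order and stop at the first one that does not succeed."""
    completed: List[str] = []
    for name, action in steps:
        if not action(ctx):
            ctx["stopped_at"] = name
            break
        completed.append(name)
    return completed


PLAN: List[Step] = [
    ("check_eligibility", check_eligibility),
    ("issue_refund", issue_refund),
    ("notify_customer", notify_customer),
]

recent = {"order_id": "A1001", "days_since_purchase": 10}
old = {"order_id": "B2002", "days_since_purchase": 45}
recent_done = run_plan(PLAN, recent)
old_done = run_plan(PLAN, old)
print(recent_done, recent.get("refund_id"))
print(old_done, old.get("stopped_at"))
```

The list of completed steps doubles as a record for closing the loop: it shows what was done and, when a plan stops early, where it stopped.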

This is the strategic leap. Conversational agents stop acting like interfaces and start acting like systems that carry responsibility from start to finish.

Read More: Here's How Agentic AI and Conversational AI Differ

6 conversational agent examples that deliver real business outcomes

The difference between a conversational interface and a truly capable conversational agent becomes visible the moment work starts unfolding over time. Not in a single reply. Not in a clean prompt. But in situations where intent shifts, information arrives late, systems disagree, and outcomes still need to be closed.

This is where agentic behavior begins to surface.

Below are real patterns where conversational agents move beyond exchange and into execution, carrying responsibility across steps while keeping the interaction human and coherent.

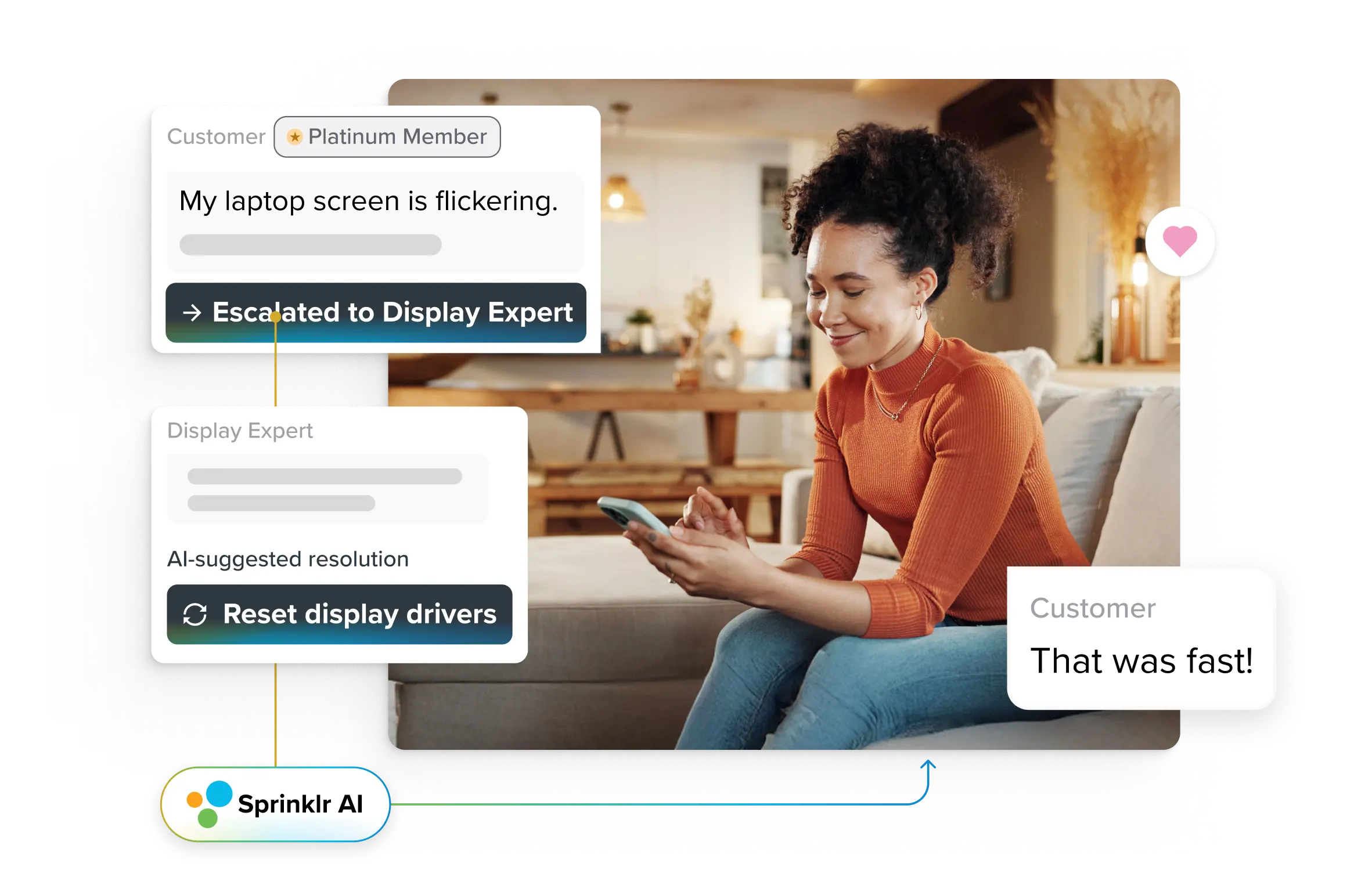

Support: handling complex issues at scale

A customer reports an issue that sounds simple on the surface: something didn’t work. A traditional chatbot would ask for keywords, surface an article, and wait. A modern conversational agent behaves differently. It starts by understanding what kind of problem this might be, then actively steers the investigation.

It may check recent activity, look for known incidents, or ask one clarifying question that meaningfully narrows the path forward. If the issue maps to a known failure pattern, the agent can open a case, attach the right metadata, and keep the customer informed as resolution progresses. If the situation evolves, it adapts. When the loop closes, it confirms closure rather than disappearing mid-thread.

The value here is not speed alone. It is continuity. The conversation becomes the spine that connects diagnosis, action, and closure without forcing the customer to repeat themselves.

Operations: when status becomes a moving target

Operational questions rarely have static answers. Questions like “Where is my delivery?” or “Can this be cancelled?” change meaning depending on timing, system state, and downstream dependencies.

A conversational agent operating in this space treats status as something to reason about, not just retrieve. It checks where a request sits in its lifecycle, evaluates what actions are still possible, and explains options in context. If cancellation is no longer possible, it explains why. If it is possible, it initiates the step and confirms the outcome.

What makes this agentic is not the API call. It’s the decision boundary. The system chooses when an action still makes sense, when to block it, and how to communicate that shift clearly. The conversation becomes a live representation of operational reality rather than a static lookup.
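The decision boundary for a cancellation request might look like the sketch below. The lifecycle states, the `cancellation_decision` function, and the wording of the messages are hypothetical; a real system would read the status from its order backend.

```python
from typing import Dict

# Assumed lifecycle for illustration: placed -> processing -> shipped -> delivered
CANCELLABLE_STATES = {"placed", "processing"}


def cancellation_decision(status: str) -> Dict[str, str]:
    """Decide whether cancelling still makes sense, and explain the outcome."""
    if status in CANCELLABLE_STATES:
        return {"action": "cancel",
                "message": "Your order has not shipped yet, so I can cancel it now."}
    if status == "shipped":
        return {"action": "offer_return",
                "message": "Your order has already shipped, so it can no longer be "
                           "cancelled. I can set up a return instead."}
    return {"action": "none",
            "message": f"An order that is '{status}' cannot be cancelled."}


for status in ("processing", "shipped", "delivered"):
    print(status, "->", cancellation_decision(status)["action"])
```

The API call that performs the cancellation is the easy part; the function above is where the agent decides whether to make it at all.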

Engagement: nudges that feel timely, not intrusive

Engagement becomes meaningful only when timing and intent align. A conversational agent that exhibits agentic behavior does not broadcast reminders blindly. It watches for signals that suggest hesitation, abandonment, or uncertainty, then decides whether intervention helps or harms.

For example, instead of repeatedly prompting a user to complete a flow, the agent may wait, infer friction, and re-enter with a clarifying question or a contextual suggestion. If engagement resumes, it steps back. If it doesn’t, it may surface an alternate path or pause outreach entirely.

The outcome is not more messages. It is fewer, better-timed ones that feel timely rather than intrusive.

Banking: when trust depends on sequence, not speed

In banking flows like KYC updates or refunds, order matters. Certain checks must happen before others. Some actions are allowed only under specific conditions. A conversational agent operating here must reason about sequence and eligibility, not just intent.

It may request documents, validate completeness, confirm eligibility, and only then proceed to the next step. Each action depends on the state created by the previous one. The agent tracks progress, explains delays, and closes the loop once updates are complete.

What makes this agentic is not autonomy for its own sake, but controlled progression through regulated steps while keeping the user oriented at every moment.

Telecom: managing disruption without losing the customer

Outages create confusion before they create tickets. A conversational agent in telecom learns to recognize early signals, correlate them with known network events, and adjust its responses accordingly. Instead of asking redundant questions, it can acknowledge the issue, explain scope, and guide next steps.

If line actions are possible, it initiates them. If not, it communicates status and follows up when conditions change. The conversation stretches over time, but it remains coherent. That continuity is what keeps frustration from turning into churn.

Retail and travel: when plans change mid-journey

Returns, exchanges, rescheduling, and disruptions all share a trait: the original plan is no longer valid. Conversational agents handle these moments by reconstructing intent in motion.

In retail, this might mean checking order state, validating eligibility, initiating a return, and confirming next steps. In travel, it can involve reviewing itinerary constraints, offering alternatives, and confirming changes. The agent keeps track of decisions already made so the customer does not have to restate them.

Here, agentic behavior shows up as coordination. The system holds the thread together while the situation evolves.

Read More: Example of AI Agents Streamlining Workflows Across Teams

What makes a conversational agent effective?

Effectiveness in conversational agents is rarely about intelligence alone. It shows up in how safe, clear, and progressive the experience feels when someone is trying to get something done. The strongest systems share a few quiet traits that prevent confusion long before frustration sets in.

Clarity and brevity come first.

Effective agents do not overload users with options or explanations. They surface only what matters right now. Clear questions, short confirmations, and precise next steps reduce cognitive effort. When users understand what the agent needs and why, they stay engaged instead of abandoning the flow midway.

Next are progress indicators.

People need to feel movement. Simple signals like “checking eligibility,” “updating your request,” or “almost done” anchor the user in the journey. Without them, silence feels like failure. With them, even longer workflows feel manageable because progress is visible.

Then come error recovery paths.

No system gets everything right. Effective conversational agents acknowledge confusion, recover gracefully, and redirect without blame. They restate understanding, offer a correction, or ask a clarifying question instead of forcing users to start over. This single capability can prevent much of the rage-pinging and repeat contacts that follow a misunderstanding.

Adaptive tone matters more than many teams expect.

A billing issue, an outage, and a password reset carry different emotional weight. Agents that adjust tone based on frustration, urgency, or uncertainty feel responsive rather than robotic. This emotional alignment often determines whether users trust the system or immediately demand a human.

Finally, intelligent escalation triggers protect the experience.

Effective agents know when to step aside. They escalate when confidence drops, risk rises, or the situation turns sensitive. Crucially, they pass context forward so users are not asked to repeat themselves.
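Passing context forward can be sketched as a handoff record that travels with the escalation. The `Handoff` fields and the `build_handoff` helper are hypothetical names for illustration; the point is that the human receives the reason, the known facts, and the conversation so far in one package.

```python
from dataclasses import asdict, dataclass
from typing import Dict, List


@dataclass
class Handoff:
    reason: str                  # why the agent stepped aside
    intent: str                  # what the user is trying to do
    known_facts: Dict[str, str]  # details already collected
    steps_completed: List[str]   # work already done
    transcript: List[str]        # conversation so far


def build_handoff(reason: str, intent: str, known_facts: Dict[str, str],
                  steps_completed: List[str], transcript: List[str]) -> Handoff:
    # Copy the inputs so later changes to agent state do not alter the handoff.
    return Handoff(reason, intent, dict(known_facts),
                   list(steps_completed), list(transcript))


facts = {"order_id": "A1001", "email": "sam@example.com"}
handoff = build_handoff(
    reason="low_confidence",
    intent="billing_dispute",
    known_facts=facts,
    steps_completed=["identity_verified"],
    transcript=["I was charged twice.", "I can help with that. Which order?", "A1001"],
)
facts["order_id"] = "changed-later"
print(asdict(handoff))
```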

Together, these elements reduce drop-offs, prevent escalation loops, and turn conversation into a steady path toward resolution rather than a test of patience.

What conversational agents still can’t do

For all their progress, conversational agents are not magic. Their limits matter just as much as their capabilities, especially in enterprise settings where trust is fragile and stakes are real.

They cannot fully replace human empathy in escalations.

When a situation turns emotionally charged, sensitive, or personal, language alone is not enough. Conversational agents can acknowledge frustration and respond with care, but they cannot replicate judgment, nuance, or reassurance in moments where people need to feel truly heard.

They also struggle with messy, multi-intent queries.

Humans often blend problems together, change direction mid-sentence, or introduce new goals halfway through a conversation. While agents can handle some ambiguity, highly tangled requests still require clarification or human intervention to avoid incorrect assumptions.

Conversational agents depend heavily on backend maturity.

If systems are fragmented, data is outdated, or workflows are unclear, the agent cannot compensate. It can only act on what the underlying environment makes possible.

They cannot override missing or unclear policies.

When rules conflict or are undefined, agents have no safe path forward. In these cases, stopping and escalating is a feature, not a failure.

Without solid guardrails, even capable agents can behave unpredictably. Guardrails define boundaries and prevent overreach.

Finally, they need clean, reliable data.

Poor data hygiene leads to incorrect actions, broken trust, and avoidable errors.

These limitations are reminders that conversational agents work best as part of a well-designed system, not as standalone solutions.

The future of conversational agents: What’s coming in 2026 and beyond

The future of conversational agents is starting to hinge on two things: shared rules and reliable memory.

The first is standardization.

Right now, many agents can work well inside one product or one company’s stack, but the next phase is about making them work safely across systems, tools, and teams without constant custom wiring. That is why standards work is gaining ground. In February 2026, NIST launched its AI Agent Standards Initiative to focus on interoperability, security, and agent identity, which signals that the industry is moving toward common rules for how agents connect, act, and are authorized to act.

The second pressure point is memory.

As agents handle longer, messier journeys, they need to remember the right things, forget the wrong things, and keep context intact over time. That sounds basic, but it is still one of the field’s weak spots. Early 2026 research on long-horizon conversational context shows that agents still struggle when conversations depend on dense repositories, extended histories, and precise recall across many steps.

That is where expectations are heading next: agents will be judged less by how fluent they sound and more by whether their behavior can be traced, tested, and trusted across long-running work. NIST’s recent work on transcript analysis points in that direction too, treating agent logs as evidence for understanding why systems fail, not just whether they fail.

What this points to is a shift in what good looks like. It’s no longer enough for agents to act correctly in isolation. They need to operate within shared standards, retain context over time, and make decisions that can be traced and trusted across systems.

This is where Sprinklr AI Agents become relevant. They are built around two of the clearest demands shaping this category now.

One, the need for continuity: agents that can carry context across voice, chat, email, and social so the journey does not keep breaking and restarting.

And two, the need for control: agents that work inside existing workflows, respect business logic, and remain visible through guardrails, monitoring, usage logs, and feedback loops.

Frequently Asked Questions

How are conversational agents different from chatbots?

Chatbots were built to respond. Conversational agents are built to progress. The difference shows up in how they handle context, ambiguity, and outcomes. Instead of matching keywords or walking users through rigid flows, conversational agents carry intent across turns, adapt when information changes, and guide interactions toward resolution. They don’t treat each message as a reset. They treat conversation as a continuous thread that connects questions, decisions, and actions into a single experience.

Are conversational agents the same as LLMs?

No, LLMs are only one part of the system. They provide language understanding and generation, but conversational agents also rely on orchestration logic, memory, policies, tools, and guardrails. Without those layers, an LLM can talk but cannot reliably act. In production environments, conversational agents are systems that use LLMs, not systems that are defined by them.

Where do conversational agents show up in everyday use?

They appear wherever people naturally ask for help or take action: inside apps, on websites, in messaging tools, and across support channels. You see them when tracking orders, rescheduling appointments, resolving issues, onboarding into products, or getting internal IT help. The best ones don’t announce themselves. They feel like a natural continuation of the journey, not a separate destination you have to find.

Which workflows are the safest to start with?

The safest starting point is workflows with clear rules, predictable outcomes, and low emotional risk, such as status checks, account updates, appointment scheduling, password resets, or eligibility checks. These flows benefit from speed and consistency while still allowing clean escalation when something unexpected appears. Starting here builds trust before expanding into more complex scenarios.

When should a conversational agent escalate to a human?

Escalation should be driven by confidence, risk, and emotion. If the agent lacks enough information, encounters policy ambiguity, detects repeated confusion, or senses rising frustration, it should step aside. The key is timing and context. Effective escalation passes the full conversation history forward so the human can continue seamlessly, not start over.